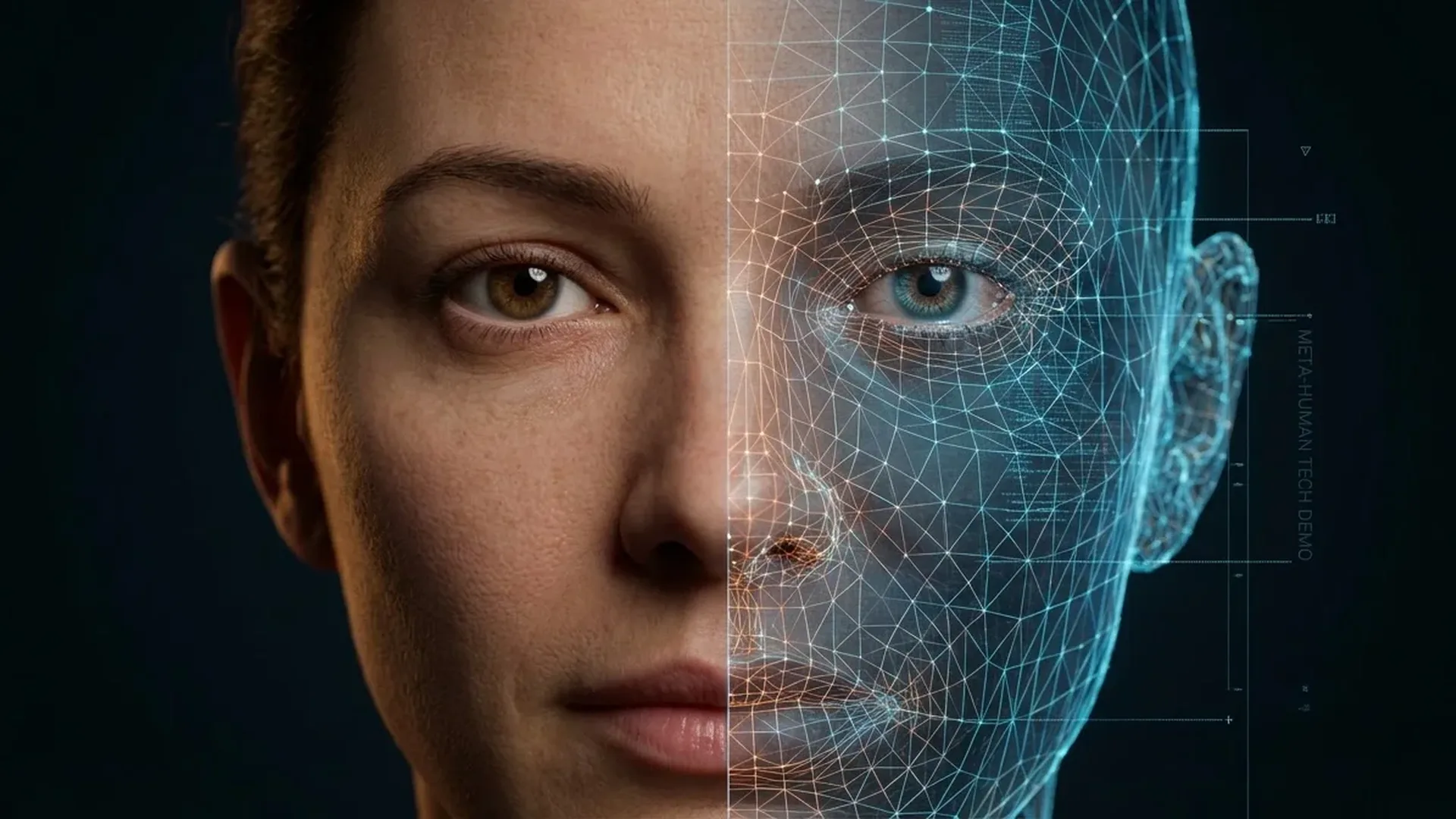

Digital humans are no longer science fiction. Thanks to artificial intelligence, tools like Epic Games' MetaHuman Creator, deepfake technology, and neural networks create digital faces so realistic they blur the line between real and virtual. In this article, we analyze how AI animation is transforming cinema, video games, advertising, and social media.

What Are Digital Humans?

A digital human (or virtual human) is a software-created character capable of reproducing realistic facial expressions, body movement, and speech. They can be replicas of real people or entirely fictional characters.

The Evolution of Digital Humans

The story begins in 1997, when the “Video Rewrite” program automated lip-sync replacement in video using machine learning. It was the first system to automatically connect speech audio with facial shape.

In 2016, Face2Face enabled real-time facial expression replacement using a simple camera, while in 2017 the “Synthesizing Obama” team created photorealistic video of the former president by synthesizing lip movements from a separate audio track. These projects laid the groundwork for modern AI animation.

MetaHuman Creator: Digital Characters in Minutes

Epic Games is a leading player in digital human creation with MetaHuman Creator, a cloud-based tool that produces photorealistic characters in minutes instead of months. The characters run in Unreal Engine 5, which supplies Nanite (virtualized geometry) and Lumen (dynamic global illumination) for realistic real-time results.

MetaHumans feature:

- Full facial animation with dozens of blendshapes for every facial muscle

- Realistic skin, hair, and eye reproduction through physically-based rendering

- Skeletal animation with lifelike body movement

- Motion capture integration for precise human movement replication

- Real-time rendering for gaming, VR, and virtual production
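To make the blendshape idea concrete, here is a minimal sketch of how blendshape (morph target) animation generally works: each shape stores per-vertex offsets from a neutral face, and the posed face is the neutral mesh plus a weighted sum of those offsets. This is the generic technique, not MetaHuman's actual rig. The function name `apply_blendshapes`, the two-vertex mesh, and the shape names are all hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def apply_blendshapes(
    base: List[Vec3],
    deltas: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Return base + sum(weight * delta) for every vertex."""
    result = [list(v) for v in base]
    for name, weight in weights.items():
        w = max(0.0, min(1.0, weight))  # clamp each weight to [0, 1]
        for i, delta in enumerate(deltas[name]):
            for axis in range(3):
                result[i][axis] += w * delta[axis]
    return [tuple(v) for v in result]


# Hypothetical two-vertex "mouth corner" mesh with two made-up shapes.
base_mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
shape_deltas = {
    "smile": [(0.0, 0.2, 0.0), (0.0, 0.2, 0.0)],
    "jaw_open": [(0.0, -0.5, 0.0), (0.0, -0.5, 0.0)],
}

# A full smile combined with a half-open jaw.
posed = apply_blendshapes(base_mesh, shape_deltas, {"smile": 1.0, "jaw_open": 0.5})
print(posed)
```

An animator, or a motion capture solver, only has to drive the weights over time. The mesh itself never changes topology, which is part of why this approach suits real-time rendering.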

Virtual Production: The Cinema Revolution

Virtual production with LED volumes and real-time rendering has fundamentally changed filmmaking. StageCraft, developed by Industrial Light & Magic in collaboration with Epic Games, the maker of Unreal Engine, lets directors shoot scenes against virtual sets rendered in real time.

Productions Using Virtual Production

Virtual production built on Unreal Engine has been used in productions such as The Mandalorian (shot on ILM's StageCraft stage), Westworld (Season 3), Fallout, and the animated series Barney's World. By October 2022, Epic Games was working with over 300 virtual sets worldwide. The first complete animated film made in Unreal Engine, “Gilgamesh,” was funded through Epic MegaGrants.

Disney has enhanced its visual effects with high-resolution deepfake face-swapping at 1024x1024 pixels, four times the side length (and 16 times the pixel count) of common 256x256 models. The technology enables de-aging (younger versions of actors) and even “resurrecting” deceased actors, a topic that generated significant debate during the 2023 SAG-AFTRA strike.
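The resolution comparison is easy to check with a little arithmetic. The short sketch below compares the two image sizes both per side and by total pixel count; the helper name `pixel_count` is just for illustration.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels in a width x height image."""
    return width * height


high = pixel_count(1024, 1024)   # 1,048,576 pixels
low = pixel_count(256, 256)      # 65,536 pixels

side_ratio = 1024 // 256         # ratio of side lengths
pixel_ratio = high // low        # ratio of total pixels

print(side_ratio, pixel_ratio)   # prints: 4 16
```

So a 1024x1024 model works at four times the side length of a 256x256 model, but it has to synthesize sixteen times as many pixels per frame.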

Deepfakes: Technology and Applications

Deepfakes rely on autoencoders: neural networks that compress an image into a low-dimensional latent representation and then reconstruct it. A shared (universal) encoder captures facial features such as expression and pose, while a decoder trained on the target person rebuilds those features as the target's face. The result maps one face onto another person's body with impressive realism.
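The data flow of that encoder/decoder arrangement can be sketched in a few lines. The example below is only an illustration of the wiring: one shared encoder and one decoder per identity. The weights are random and untrained, the layers are plain linear maps, and the class name `FaceSwapSketch`, the sizes, and the identity labels "A" and "B" are all assumptions. Real systems use deep convolutional networks trained on many images of each person.

```python
import random
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small random weights; a real model would learn these by training."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class FaceSwapSketch:
    """Shared encoder plus one decoder per identity (untrained, linear)."""

    def __init__(self, image_size: int, latent_size: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.encoder = random_matrix(latent_size, image_size, rng)
        self.decoders = {
            "A": random_matrix(image_size, latent_size, rng),
            "B": random_matrix(image_size, latent_size, rng),
        }

    def encode(self, image: Vector) -> Vector:
        # Compress the image into the shared latent space.
        return matvec(self.encoder, image)

    def decode(self, latent: Vector, identity: str) -> Vector:
        # Reconstruct an image using the decoder for the chosen identity.
        return matvec(self.decoders[identity], latent)

    def swap(self, image: Vector, target_identity: str) -> Vector:
        # Encode with the shared encoder, decode with the target's decoder.
        return self.decode(self.encode(image), target_identity)


model = FaceSwapSketch(image_size=64, latent_size=8)
face_a = [0.5] * 64  # stand-in for a flattened 8x8 grayscale crop of person A
latent = model.encode(face_a)
swapped = model.swap(face_a, target_identity="B")
print(len(latent), len(swapped))
```

The swap happens entirely in the choice of decoder: the latent code carries expression and pose from person A, and decoder "B" renders that code as person B's face.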

Key applications:

- Cinema: Actor de-aging, resurrecting deceased actors (Disney, Lucasfilm)

- Entertainment: Virtual concerts such as ABBA Voyage and Kiss's digital avatars, both created with ILM

- Commercial: Synthesia creates training videos with AI avatars

- AI actors: In May 2025, Xicoia, an AI studio founded by Dutch producer Eline Van der Velden, created Tilly Norwood, billed as an AI-generated actress

- Music: Kendrick Lamar used deepfake in “The Heart Part 5” (2022) via Deep Voodoo

Virtual Influencers: Digital Faces on Social Media

Virtual influencers — digital characters with social media presence — are an emerging phenomenon. Lil Miquela, an entirely digital influencer, has millions of Instagram followers. Brazil's Lu do Magalu functions simultaneously as a virtual assistant and brand ambassador.

Research shows that digital characters with human-like appearance achieve higher message credibility compared to anime-style designs. However, the “uncanny valley” remains an obstacle: characters that are “almost but not quite” realistic trigger aversion responses.

"We see the virtual human as more than a useful artifact. We see it as a tool for understanding ourselves."

(Perceiving Systems, Max Planck Institute for Intelligent Systems)

Risks and Deepfake Detection

The same technology creating impressive digital humans is also used for malicious purposes. In 2024, over half of documented identity fraud cases involved AI-generated forgeries. Engineering firm Arup lost $25 million in a deepfake video call scam in Hong Kong.

Countermeasures and detection:

- DARPA MediFor/SemaFor: US military programs for detecting manipulated media

- Microsoft Video Authenticator: Real-time deepfake detection tool

- Eye reflection analysis: University at Buffalo researchers identify deepfakes through inconsistencies between the light reflections in a subject's two eyes

- Facebook DFDC: The Deepfake Detection Challenge, which released a dataset of more than 100,000 videos for training detection algorithms
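As an illustration of the eye reflection idea from the list above, one simple way to quantify "inconsistent reflections" is to compare the bright highlight regions of the two eyes with an intersection-over-union (IoU) score. The sketch below assumes the highlights have already been extracted and aligned as small binary masks; the masks, the function name `highlight_iou`, and the 0.5 threshold are made up for the example, and this is not the researchers' actual implementation.

```python
from typing import List

Mask = List[List[int]]  # 1 = pixel belongs to a specular highlight


def highlight_iou(left: Mask, right: Mask) -> float:
    """Intersection-over-union of two equally sized binary masks."""
    intersection = 0
    union = 0
    for row_l, row_r in zip(left, right):
        for a, b in zip(row_l, row_r):
            intersection += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    return intersection / union if union else 1.0


left_eye = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
right_consistent = [
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
right_inconsistent = [
    [0, 0, 0, 0],
    [0, 0, 0, 1],
    [0, 0, 1, 1],
]

THRESHOLD = 0.5  # assumed cut-off, not a published value

for name, right_eye in [("consistent", right_consistent),
                        ("inconsistent", right_inconsistent)]:
    score = highlight_iou(left_eye, right_eye)
    verdict = "plausibly real" if score >= THRESHOLD else "suspicious"
    print(f"{name}: IoU={score:.2f} -> {verdict}")
```

In a real photo both eyes see the same light sources, so their highlights tend to overlap well after alignment. A low score is a hint rather than proof, since lighting close to the face or an off-axis gaze can also lower it.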

Legislation and Ethical Issues

Deepfake regulation is evolving rapidly:

- EU AI Act (2024): AI risk categorization — deepfakes fall under “specific/limited risk” with transparency obligations

- USA: California passed laws against sexual deepfakes (AB-602) and political deepfakes (AB-730)

- China: Deep Synthesis Provisions — mandatory disclosure of AI-generated content since 2023

- SAG-AFTRA 2023: The actors' strike focused on protecting digital likeness

The Future: What's Coming?

Digital human evolution is accelerating:

- Unreal Engine 6, expected in 2-3 years, promises even more realistic digital humans

- Unity's 2021 acquisition of Weta Digital's tools division (the visual effects studio itself continues as Weta FX) aimed to bring cinema-quality effects to real-time

- AI-generated actors like Tilly Norwood open a new era for the entertainment industry

- Real-time voice synthesis combined with facial animation will make digital characters increasingly hard to distinguish from real people

- Interactive AI drama — digital actors that respond in real-time to the viewer — is approaching

Conclusion

Digital humans stand at the crossroads of technology, art, and ethics. From MetaHumans to deepfakes and virtual influencers, AI animation is transforming how we create and consume visual content. The challenge isn't just technological, it's social: how do we maintain trust in a world where our eyes are no longer enough to distinguish the real from the artificial?