⚖️ The EU AI Act: The World's First AI Law

In August 2024, the EU AI Act (Regulation (EU) 2024/1689) entered into force as the first comprehensive legal framework for AI worldwide. The Regulation takes a risk-based approach with four levels, from minimal to unacceptable. The goal is clear: AI systems must be trustworthy, safe, and respectful of fundamental rights.

🚫 What the EU AI Act Prohibits

Since 2 February 2025, eight AI practices have been prohibited in the EU. They include:

- Social scoring — the Chinese-style model of rating citizens is unthinkable in Europe

- Emotion recognition in workplaces and educational institutions

- Biometric categorization based on protected characteristics (race, religion, sexual orientation)

- Untargeted scraping of faces from the internet or CCTV for facial recognition databases

- Predicting an individual's risk of committing a crime based solely on profiling or personality traits

- Manipulative or deceptive AI techniques, and AI that exploits the vulnerabilities of groups such as children or people with disabilities

For high-risk AI, such as systems used in hiring, credit scoring, justice, and critical infrastructure, the Regulation imposes strict obligations: risk assessment, high-quality datasets, human oversight, traceability, and cybersecurity. These rules apply in stages, from August 2026 through August 2027.
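
As a rough illustration of the tiered structure described above, the sketch below maps a few use cases named in this article to risk levels. The names `RiskTier`, `EXAMPLE_USE_CASES`, `classify`, and `is_prohibited` are hypothetical, chosen only for illustration. The lookup keys are informal labels rather than legal categories, and falling back to the minimal tier for anything unlisted is a simplifying assumption, since real classification depends on the Regulation's full text and annexes.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk levels, from least to most restricted."""
    MINIMAL = "minimal"
    LIMITED = "limited"            # transparency obligations
    HIGH = "high"                  # strict obligations (oversight, traceability, ...)
    UNACCEPTABLE = "unacceptable"  # prohibited outright


# Hypothetical lookup table: informal labels for use cases mentioned in
# this article, not legal definitions taken from the Regulation.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "workplace emotion recognition": RiskTier.UNACCEPTABLE,
    "untargeted face scraping": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit scoring": RiskTier.HIGH,
    "critical infrastructure": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "deepfake generation": RiskTier.LIMITED,
}


def classify(use_case: str) -> RiskTier:
    """Return the illustrative tier for a use case.

    Unlisted use cases fall back to MINIMAL, which is an assumption
    made for this sketch only.
    """
    return EXAMPLE_USE_CASES.get(use_case.strip().lower(), RiskTier.MINIMAL)


def is_prohibited(use_case: str) -> bool:
    """True when the illustrative tier is UNACCEPTABLE."""
    return classify(use_case) is RiskTier.UNACCEPTABLE
```

For instance, `classify("hiring")` returns `RiskTier.HIGH`, while `is_prohibited("social scoring")` returns `True`.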

📋 Algorithm Liability: Who Pays?

Beyond the AI Act, the European Commission proposed the AI Liability Directive (AILD) in September 2022 to adapt civil liability rules for the AI era. The central problem? When an AI causes harm, the victim struggles to prove who is at fault, because algorithms are often “black boxes.” In its 2025 work programme, the Commission indicated that it intends to withdraw the proposal, so the AILD remains a proposal rather than binding law.

The European Commission recognized that existing liability rules are ill-suited to the challenges AI poses. The AILD was designed to ease the burden of proof for people harmed by AI systems, so that they would have the same level of protection as those harmed by other technologies.

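In practice, the proposal would work like this: if an AI system denies you a loan, rejects you in hiring, or causes material damage, you would not need to prove how the algorithm works internally. Courts could order the provider to disclose evidence and, under certain conditions, presume a causal link between the provider's fault and the harm, a presumption the provider could then rebut.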

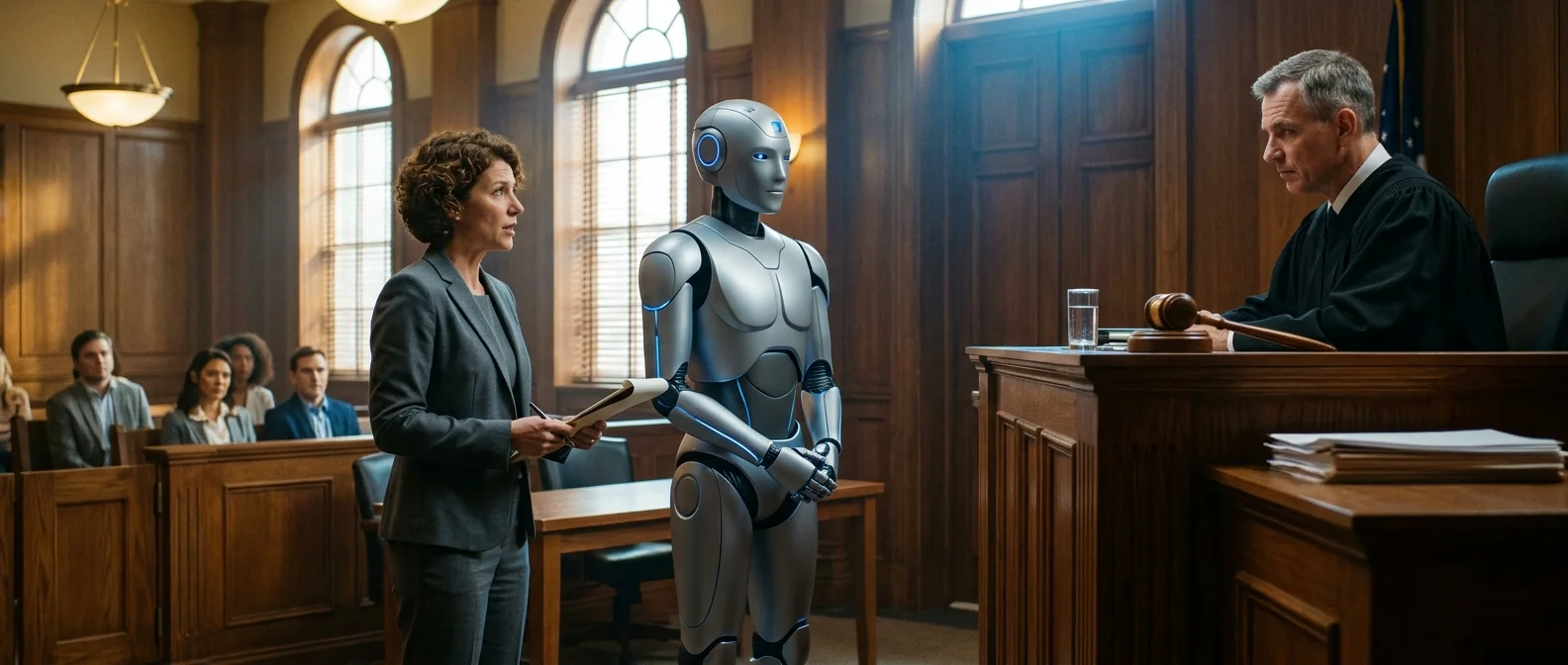

🤖 Electronic Personhood: Rights for Robots?

In 2017, the European Parliament adopted a resolution asking the Commission to consider creating a status of "electronic personhood" for the most advanced robots. The idea provoked strong backlash: over 150 experts signed an open letter against the proposal, arguing it would undermine the liability of manufacturers.

The question remains open: if an AI system can make autonomous decisions that affect lives, should it have some form of legal standing? The majority of legal scholars and ethicists answer no — at least not yet. Liability must remain with the humans who design, develop, and deploy AI systems.

🌍 Who Controls AI Globally?

At EU level, the AI Office within the European Commission oversees implementation of the AI Act and monitors the most powerful general-purpose AI (GPAI) models, such as GPT and Gemini. Three further bodies support it: the European AI Board (Member State representatives), the Scientific Panel (independent experts), and the Advisory Forum (stakeholders).

At national level, each Member State has designated market surveillance authorities for compliance enforcement. Additionally, fundamental rights protection authorities gain special powers to request information and cooperation from AI providers in cases of serious incidents.

🔮 What Comes Next

In August 2026, the rules for high-risk AI and the transparency obligations will take effect. Chatbots will need to disclose that users are talking to a machine, deepfakes will need to be labeled, and generative AI providers will need to make their output identifiable as AI-generated.

The real question isn't whether robots need rights — but whether we have rights against them. The right to know when we're talking to a machine. The right to challenge an algorithm's decision. The right not to be surveilled, scored, or discriminated against. And these rights, for the first time, now have a legal foundation.