A dime-sized chip. 200,000 neurons. The legendary Doom. These three elements combined in March 2026 to prove that science fiction sometimes isn't as fictional as we think. Australian company Cortical Labs put human brain cells to work playing one of the most famous games of all time — and they learned.

This isn't Cortical Labs' first rodeo with biological computers. Back in 2021, they trained 800,000 neurons to play Pong — the classic two-dimensional ping-pong game. But Doom? That's an entirely different level of complexity.

🧬 How the Neural Chip Works

These neurons are not harvested from brains. Blood or skin samples are converted into stem cells, which are then grown into neural cells. As Brett Kagan, Cortical Labs' chief scientific officer, explains: "You can take a small piece of blood or skin and create an unlimited supply of neural cells."

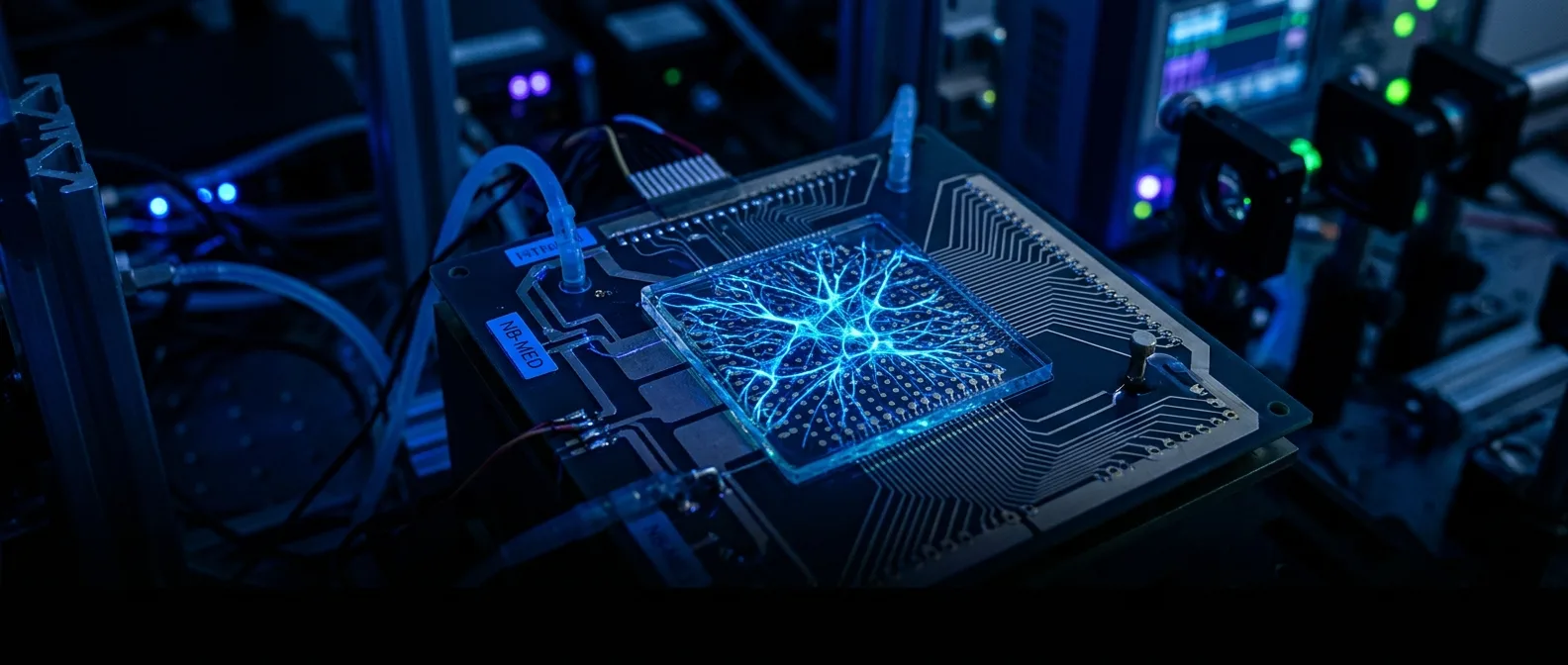

The CL-1 chip is essentially life support for neurons. Cells grow on top of microelectrodes that can send and receive electrical signals. Communication happens through electricity — "the common language between biology and silicon," as Kagan describes it.

From Pixels to Neural Signals

The most impressive part isn't that the cells played Doom. It's how they learned to play without eyes. Programmer Sean Cole managed to translate the game's visual data into electrical patterns the neurons could "see." And he did it in one week, using Python.
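The article does not describe how the translation was actually done, so the following is only a minimal sketch of one plausible encoding, not the method Cortical Labs used. It assumes a grayscale frame is averaged down to a small electrode grid and that brighter patches map to faster stimulation. The function name `encode_frame`, the grid size, and the 40 Hz ceiling are all hypothetical.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixels in the range 0-255, row-major


def encode_frame(frame: Frame, grid_rows: int = 8, grid_cols: int = 8,
                 max_rate_hz: float = 40.0) -> List[List[float]]:
    """Downsample a frame to a grid of per-electrode stimulation rates.

    Each electrode receives the mean brightness of its image patch,
    scaled to a pulse rate between 0 and max_rate_hz. The grid size and
    rate ceiling are illustrative assumptions, not CL-1 specifications.
    """
    height, width = len(frame), len(frame[0])
    if height < grid_rows or width < grid_cols:
        raise ValueError("frame must be at least as large as the electrode grid")

    rates: List[List[float]] = []
    for r in range(grid_rows):
        # Pixel rows covered by this row of electrodes.
        y0, y1 = r * height // grid_rows, (r + 1) * height // grid_rows
        row_rates: List[float] = []
        for c in range(grid_cols):
            # Pixel columns covered by this electrode.
            x0, x1 = c * width // grid_cols, (c + 1) * width // grid_cols
            patch = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            mean_brightness = sum(patch) / len(patch)
            row_rates.append(max_rate_hz * mean_brightness / 255.0)
        rates.append(row_rates)
    return rates


# A 16x16 test frame: left half black, right half white.
frame = [[0] * 8 + [255] * 8 for _ in range(16)]
rates = encode_frame(frame, grid_rows=2, grid_cols=2)
```

Here the two left-hand electrodes stay silent and the two right-hand ones fire at the maximum rate, so the pattern still carries the frame's left/right structure even though no image is ever "shown" to the cells.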

⚡ The "Chaos as Punishment" Method

How do you motivate a cell to do something specific? You can't tell it "kill the demon." Cortical Labs' team relied on neuroscientist Karl Friston's free energy principle.

"If I reach for an empty drink can and successfully predict the outcomes of my actions, that's a world I can live in. But if I reach for it and sometimes it becomes a chicken and sometimes fireworks, that world would be impossible to live in."

Brett Kagan, Cortical Labs

The logic is simple: neural systems want to predict their environment. Wrong moves produce white noise — unpredictable signals. Right moves produce structured, predictable signals. So cells learn to avoid chaos. Chaos is punishment, order is reward.
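The reward scheme described above can be sketched in a few lines. This is an illustration of the idea only, under the assumption that feedback is delivered as on/off pulses across a handful of electrodes. The function `feedback_signal`, the electrode count, and the pulse period are hypothetical and do not come from Cortical Labs.

```python
import random
from typing import List, Optional


def feedback_signal(correct: bool, n_electrodes: int = 8, n_steps: int = 20,
                    rng: Optional[random.Random] = None) -> List[List[int]]:
    """Return a pulse pattern: rows are time steps, columns are electrodes.

    Correct move: all electrodes pulse together on a fixed period, so the
    next pulse is always predictable.
    Wrong move: each electrode pulses independently at random, which
    approximates white noise and cannot be predicted.
    """
    rng = rng or random.Random()
    if correct:
        period = 4  # hypothetical spacing between synchronized pulses
        return [[1 if t % period == 0 else 0] * n_electrodes
                for t in range(n_steps)]
    return [[rng.randint(0, 1) for _ in range(n_electrodes)]
            for _ in range(n_steps)]


reward = feedback_signal(correct=True)
punishment = feedback_signal(correct=False, rng=random.Random(0))
```

Calling `feedback_signal(correct=True)` twice yields the same pattern every time, while the punishment pattern changes with every call unless the random generator is seeded. That contrast, not any notion of pleasure or pain, is the whole incentive.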

Why Doom and Not Something Else?

Doom has become the unofficial "compatibility test" for every new technology. It's been ported to everything, from gut bacteria and pregnancy tests to blockchains and PDFs. But this isn't just a gimmick. Doom has three-dimensional navigation, enemies, corridors, and lots of things trying to kill you. It's complex enough to test a system's capabilities.

🎯 What This Means for the Future

The performance? Not impressive. The neurons play better than random clicks, but much worse than an average player. However, they learn faster than traditional silicon-based AI systems.

The appeal lies less in current performance than in efficiency. The human brain consumes only about 20 watts, less than a dim light bulb. Matching its computational power with silicon would, by this estimate, take at least a million times more energy.
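To put those two figures side by side, here is a back-of-the-envelope calculation. It takes the article's 20 watts and its "million times" factor at face value, treating the factor as a lower bound. The constant and function names are illustrative.

```python
BRAIN_POWER_W = 20           # figure quoted in the article
SILICON_FACTOR = 1_000_000   # "at least a million times", used as a lower bound

silicon_power_w = BRAIN_POWER_W * SILICON_FACTOR


def daily_energy_kwh(power_w: float) -> float:
    """Energy used in 24 hours at a constant power draw, in kilowatt-hours."""
    return power_w * 24 / 1000


print(f"Silicon equivalent: {silicon_power_w / 1e6:.0f} MW")
print(f"Brain, per day: {daily_energy_kwh(BRAIN_POWER_W):.2f} kWh")
print(f"Silicon, per day: {daily_energy_kwh(silicon_power_w) / 1000:.0f} MWh")
```

On these assumptions the silicon equivalent draws 20 MW. Over a day the brain uses 0.48 kWh, while the silicon system uses 480 MWh.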

Real-World Applications

Yoshikatsu Hayashi from the University of Reading explains that playing Doom "is like a simpler version of controlling an entire arm." His team tests similar systems for controlling robotic hands using biological computers made from hydrogel.

Cortical Labs sees two main application areas. First, medicine: "93 to 99 percent of clinical trials in the neuropsychiatric space fail," says Kagan. Many of these drugs get tested on cells in dishes, but brain cells aren't designed to sit in an information vacuum. Second, computing: biological neurons have at least third-order complexity, while silicon transistors have first-order — binary states, 0 and 1.

🤖 The Big Energy Question

As AI "eats" more and more electricity, brain organoids (three-dimensional clusters of lab-grown neural tissue) start looking like a logical solution. Feng Guo from Indiana University has created "Brainoware", a system that uses such organoids for computation.

Neuromorphic Architecture: mimicking brain structure for more efficient computation

Energy Efficiency: potentially millions of times lower energy consumption than conventional AI systems

Hybrid Systems: combining biological and silicon components for optimal performance

Not Ready for Mass Market Yet

Kagan is realistic: "A pocket calculator will beat me at division any day. But the best AI algorithm isn't as good as going to someone else's house and figuring out how to make tea."

Don't expect a personal computer running on brain-in-a-jar anytime soon. There's still plenty of research to be done. But as Kagan says: "You move from science fiction to science when you can work on the problem."

🔬 The Next Steps

A hackathon held with Stanford University showed something interesting: when participants combined the neurons with a regular learning algorithm, the hybrid system outperformed the algorithm alone. This suggests that the biological cells actually contributed to the learning process.

Steve Furber from the University of Manchester agrees that Doom is a significant leap from Pong, but there's still much we don't understand. How do neurons know what's expected of them? How can they "see" the screen without eyes?

"What's exciting here isn't just that a biological system can play Doom, but that it can handle complexity, uncertainty, and real-time decision-making."

Andrew Adamatzky, University of the West of England

The Python Bridge

What makes this development particularly significant is accessibility. While Pong required years of painstaking scientific effort, the Doom demo was built in days by a developer with relatively little biology experience. Cortical Labs developed a Python interface that makes these chips easier to program.

A few years ago, biological computing had only one published Pong game to its name. Now it has a commercial platform, an API developers can connect to, and a video of neurons playing Doom — badly, but learning.

💭 The Philosophical Dimension

What does it mean to live in a world where machines think with human cells? Kagan warns against anthropomorphizing: "This isn't an animal or human or something as complex as an insect. It's a system."

But that's where the beauty lies. They're not trying to create artificial consciousness or copy the human brain. They're using biology as material that can process information in ways we can't recreate in silicon.

As 2026 progresses, it seems the line between biology and technology is blurring more and more. And maybe that's exactly what we need to tackle the energy challenges of next-generation AI systems. When traditional data centers consume as much electricity as small countries, sometimes the solution lies in returning to nature — with a technological twist.