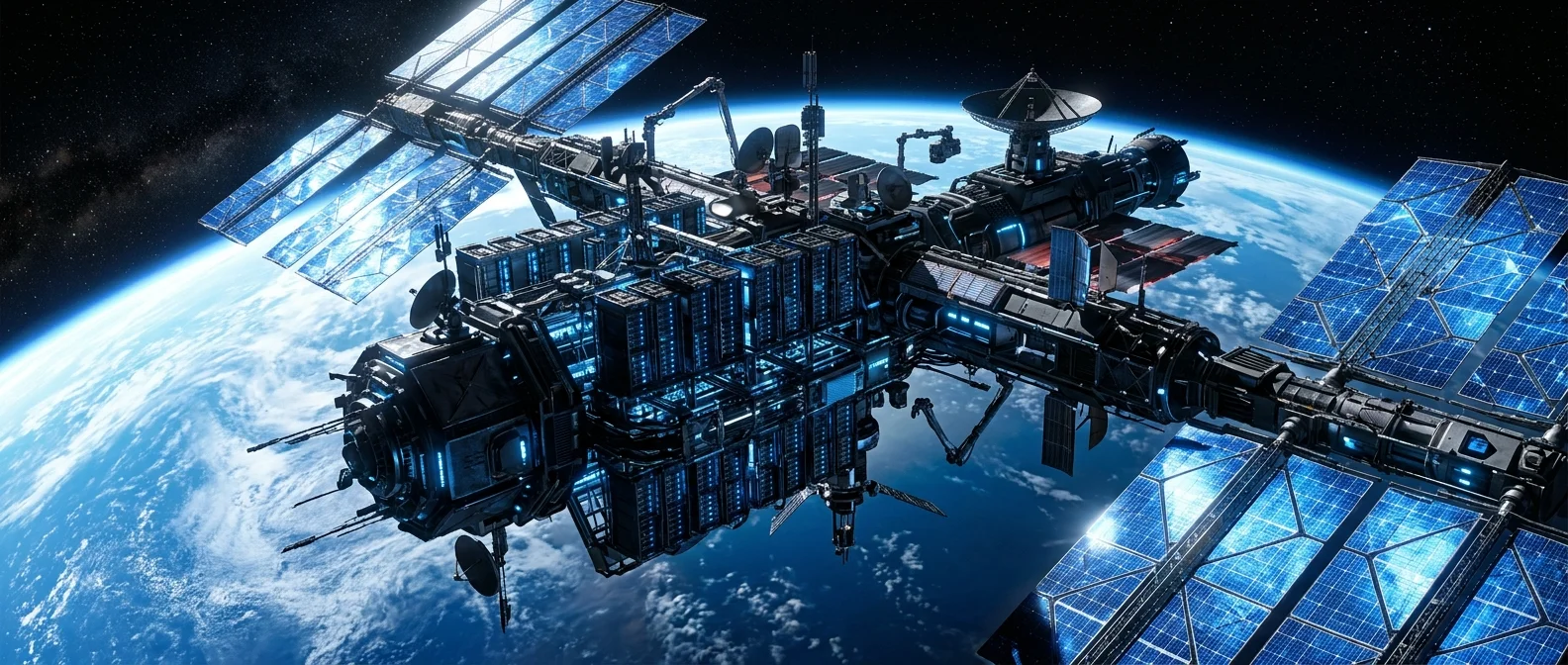

In November 2025, an NVIDIA H100 GPU entered orbit around Earth. It was billed not as a lab experiment but as the first satellite data center in history. A few weeks later, it trained an AI model in space. The race for orbital data centers isn't about to start; it's already underway.

🖥️ Why Move Computers to Space

The question sounds absurd. Why send servers up there when you can build another data center in Virginia? The answer lies in three very terrestrial problems.

Energy. Data centers already consume roughly 2-3% of global electricity, and AI is pushing that figure much higher. Training a single large language model (LLM) can require gigawatt-hours of electricity. In a well-chosen low orbit, such as a dawn-dusk sun-synchronous orbit, a satellite sits in near-continuous sunlight, so solar panels can feed the hardware directly with little or no battery storage.

Cooling. About 40% of a data center's energy cost goes to cooling. In space, heat leaves by radiation alone: radiator surfaces emit it into the vacuum as infrared, with no fans, no water, and no air conditioning.
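Radiating heat into vacuum still takes surface area, and the Stefan-Boltzmann law gives a rough feel for how much. The sketch below is an idealized estimate, not a thermal design: the emissivity of 0.9, the 300 K radiator temperature, and the two-sided flat panel are all assumptions, and it ignores absorbed sunlight and Earth's infrared glow.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sides=2):
    """Flat-panel area needed to radiate heat_w watts to deep space.

    Idealized: ignores absorbed sunlight, Earth infrared, and the
    cold-sky background temperature. All defaults are assumptions.
    """
    flux_per_side = emissivity * SIGMA * temp_k ** 4  # W per m^2 of surface
    return heat_w / (flux_per_side * sides)


if __name__ == "__main__":
    for heat_w in (1_000, 1_000_000):
        area = radiator_area_m2(heat_w, temp_k=300.0)
        print(f"{heat_w:>9,} W at 300 K -> {area:,.1f} m^2 of radiator")
```

Under these assumptions a kilowatt needs a bit more than one square meter of panel, and the area grows linearly with the heat load, which is why megawatt-class nodes imply very large radiators.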

Permitting. Building a new gigawatt-class data center on Earth takes 3-5 years of permits, environmental studies, and grid connections. In space, those constraints don't exist.

🛰️ Starcloud: The First GPU in Orbit

Starcloud (formerly Lumen Orbit) made history. In November 2025, it launched Starcloud-1 carrying the first NVIDIA H100 GPU into orbit — the most powerful AI processor ever placed in space.

In December, Starcloud-1 achieved two firsts: it ran Gemma, Google DeepMind's open model, in space, and it trained an LLM, Andrej Karpathy's nanoGPT. It was the first time an AI model had been trained off Earth.

"NanoGPT — the first LLM to train and inference in space. It begins."

Andrej Karpathy, OpenAI co-founder and nanoGPT creator

Tech leaders took notice. Eric Schmidt (former Google CEO) commented: “This is a seriously cool achievement.” Demis Hassabis (Google DeepMind CEO) said: “First LLM contact from space using our Gemma models!”

Starcloud isn't a garage startup: it's backed by NVIDIA, Y Combinator, Sequoia, a16z, In-Q-Tel (the CIA's venture capital fund), and NFX.

The company is based in Redmond, Washington, and was founded by three engineers with serious credentials: Philip Johnston (Harvard, Wharton, former McKinsey), Ezra Feilden (PhD Imperial College, ex-Airbus Defense & Space, worked on NASA's Lunar Pathfinder), and Adi Oltean (ex-SpaceX Starlink, 20 years at Microsoft, 25+ patents). The roadmap includes four satellite generations: Starcloud-1 (already orbiting), Starcloud-2, Starcloud-3, and Starcloud-4 — targeting gigawatt-scale computing.

🏗️ Axiom Space: Data Centers on the International Space Station

Axiom Space, known primarily for private astronaut missions, entered the field aggressively. And started earlier than most.

In 2022, on the Ax-1 mission, they launched an AWS Snowcone device to the ISS — performing the first commercial AI inference in orbit. In fall 2025, they deployed AxDCU-1 (Data Center Unit-1), a prototype running Red Hat Device Edge with cloud computing, AI/ML, data fusion, and cybersecurity capabilities.

On January 11, 2026, the first two orbital data center (ODC) nodes launched to low-Earth orbit. Equipped with optical inter-satellite links (OISLs) rated at 2.5 Gbps, these nodes do more than relay data to Earth. They process it in orbit (image filtering, pattern detection, compression, AI inferencing) and transmit only what's needed.

🔑 How does an orbital data center work?

A satellite collects raw data (images, telemetry). Instead of sending everything to Earth, it forwards it via optical link to the nearest ODC node. The node runs AI/ML models, filters, compresses, and sends only useful results to Earth. If connectivity drops, the node operates autonomously — storing data, making local decisions, triggering alerts.
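The flow described above (filter in orbit, queue results, keep working when the link is down) can be sketched as a small store-and-forward loop. This is an illustration only, not Axiom's software: `Frame`, `OdcNode`, the cloud-cover filter, and both thresholds are hypothetical names and values invented for the example, and `anomaly_score` stands in for whatever an onboard model would produce.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Frame:
    frame_id: int
    cloud_fraction: float  # 0.0 = clear sky, 1.0 = fully clouded (hypothetical)
    anomaly_score: float   # stand-in for the output of an onboard model


@dataclass
class OdcNode:
    max_cloud: float = 0.5        # assumed filter threshold
    alert_threshold: float = 0.9  # assumed alert threshold
    outbox: deque = field(default_factory=deque)  # results awaiting a downlink
    alerts: list = field(default_factory=list)

    def ingest(self, frame: Frame) -> None:
        """Filter a raw frame and queue only a compact result."""
        if frame.cloud_fraction > self.max_cloud:
            return  # unusable image: drop it instead of downlinking it
        if frame.anomaly_score >= self.alert_threshold:
            self.alerts.append(frame.frame_id)  # local decision, no ground contact
        self.outbox.append({"id": frame.frame_id,
                            "score": round(frame.anomaly_score, 2)})

    def downlink(self, link_up: bool) -> list:
        """Send queued results if the link is up; otherwise keep storing."""
        if not link_up:
            return []
        sent = list(self.outbox)
        self.outbox.clear()
        return sent


if __name__ == "__main__":
    node = OdcNode()
    for f in (Frame(1, 0.8, 0.2), Frame(2, 0.1, 0.95), Frame(3, 0.3, 0.4)):
        node.ingest(f)
    print(node.downlink(link_up=False))  # link down: nothing sent, results kept
    print(node.downlink(link_up=True))   # link restored: queued results go out
    print(node.alerts)
```

The point of the design is that the raw frames never leave the node: only a few bytes per usable image reach the ground, and a dropped link delays delivery without losing results.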

Axiom Space secured $350 million in financing in February 2026. The goal by 2030: expansion from kilowatts to megawatts of processing power in orbit — using commercial off-the-shelf hardware, containerized operating systems, and optical relay networks.

⚡ The Advantages — And the Obstacles

The advantages are clear: free solar energy without batteries, free cooling without water, zero terrestrial footprint, and scalability without bureaucracy. No city will complain that a data center in space is “using our water” or “heating up the neighborhood.”

But the physics work against you in four ways.

Launch cost. Despite reductions thanks to SpaceX (Falcon 9 costs ~$2,700/kg to LEO), transporting hundreds of tons of equipment remains expensive. Starship promises costs below $100/kg — if it becomes fully reusable, everything changes.
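To put the two per-kilogram prices side by side, here is a back-of-the-envelope calculation. The 100-metric-ton payload is an assumed round number chosen for illustration, and the figures cover launch only, not the hardware itself.

```python
def launch_cost_usd(mass_kg, price_per_kg):
    """Launch cost only: payload mass times price per kilogram to LEO."""
    return mass_kg * price_per_kg


if __name__ == "__main__":
    payload_kg = 100_000  # assumption: 100 metric tons of equipment
    prices = (("Falcon 9 (~$2,700/kg)", 2_700),
              ("Starship target ($100/kg)", 100))
    for label, price in prices:
        print(f"{label}: ${launch_cost_usd(payload_kg, price):,.0f}")
```

At the quoted prices that is $270 million versus $10 million for the same mass, a 27-fold difference.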

Latency. Low-Earth orbit (LEO) adds roughly 4-8 ms of signal delay, and a satellite stays in view of any one ground station for only minutes at a time. That is acceptable for batch AI training but awkward for real-time applications. Orbital data centers are best suited to workloads that don't require an instant response: model training, satellite image analysis, scientific computing.
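The delay figure can be sanity-checked from the speed of light alone. The sketch below assumes the best case, a satellite directly overhead, and counts only propagation time; slant range, onboard processing, and ground-network routing all add to it. The sample altitudes are assumptions chosen to bracket typical LEO.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum


def round_trip_ms(altitude_km):
    """Best-case round-trip propagation delay to a satellite overhead."""
    return 2 * altitude_km / C_KM_PER_S * 1000


if __name__ == "__main__":
    for altitude_km in (550, 600, 1200):
        print(f"{altitude_km:>5} km -> {round_trip_ms(altitude_km):.2f} ms")
```

Under these assumptions, a 4-8 ms round trip corresponds to altitudes of roughly 600 to 1,200 km.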

Radiation. Cosmic radiation causes bit flips in memory and chip degradation. Radiation-hardened hardware or algorithmic error correction solutions are required — adding cost and reducing performance compared to terrestrial data centers.
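One simple form of algorithmic error correction is triple modular redundancy: keep three copies of a value and take a bitwise majority vote when reading it back. The sketch below is a generic illustration of that technique, not a description of what any of the companies mentioned here actually fly; `majority_vote` and `flip_bit` are names made up for the example.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit matches at least two inputs."""
    return (a & b) | (a & c) | (b & c)


def flip_bit(value: int, bit: int) -> int:
    """Simulate a radiation-induced single-bit upset."""
    return value ^ (1 << bit)


if __name__ == "__main__":
    original = 0b1011_0010
    copies = [original, flip_bit(original, 3), original]  # one copy corrupted
    recovered = majority_vote(*copies)
    print(f"corrupted copy: {copies[1]:08b}")
    print(f"recovered:      {recovered:08b}")
    print("match:", recovered == original)
```

The vote repairs any single corrupted copy, but it fails if the same bit flips in two copies, and it triples the storage. That overhead is the cost-and-performance penalty in miniature.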

Maintenance. If a server fails in Scottsdale, you send a technician. If one fails in orbit? Axiom Space plans module replacement via resupply missions — but that's neither cheap nor fast.

🌐 Who Will Use Orbital Computing

The first customers won't be Netflix or Spotify. They'll be military and governments — and that's no coincidence.

Axiom Space designed its ODC nodes to comply with Space Development Agency (Tranche 1) optical communication standards and to interoperate with national security networks. Digital sovereignty — a country's ability to process its data without passing through foreign servers — is becoming a central selling point.

After the military, Earth observation companies follow. Today, satellites capture petabytes of images and downlink them to Earth for analysis. With orbital computing, the analysis happens in orbit and only the results come down, saving bandwidth, time, and money.

And then? AI training. The idea that someday you'll train a foundation model on orbital GPU clusters, powered entirely by solar energy, has moved from theory to hardware. Starcloud-1 proved the concept works. Now comes the race to scale.

🔮 Where We're Headed

Starcloud is preparing next-generation satellites (Starcloud-2 through 4) targeting gigawatt-scale computing in space. Axiom Space aims for a network of megawatt-class nodes by 2030. Microsoft Azure Space is already researching orbital cloud computing. Amazon (Project Kuiper) hasn't announced orbital compute, but the AWS Snowcone on the ISS was a clear signal.

If Starship brings launch costs below $100/kg, the math could flip: putting a server rack in orbit may become cheaper than wiring a new data center to the grid.

Terrestrial data centers aren't getting replaced. But a significant portion of AI computing could migrate to orbit within a decade. The idea sounds crazy — exactly as crazy as the idea of a reusable rocket sounded ten years ago.