Data centers packed with NVIDIA GPUs consume as much electricity as small countries. As AI models grow, electronic circuitry is running into its physical limits. The solution? Replace electrons with photons. Photonic AI chips promise up to 100x faster processing at a fraction of the energy cost.

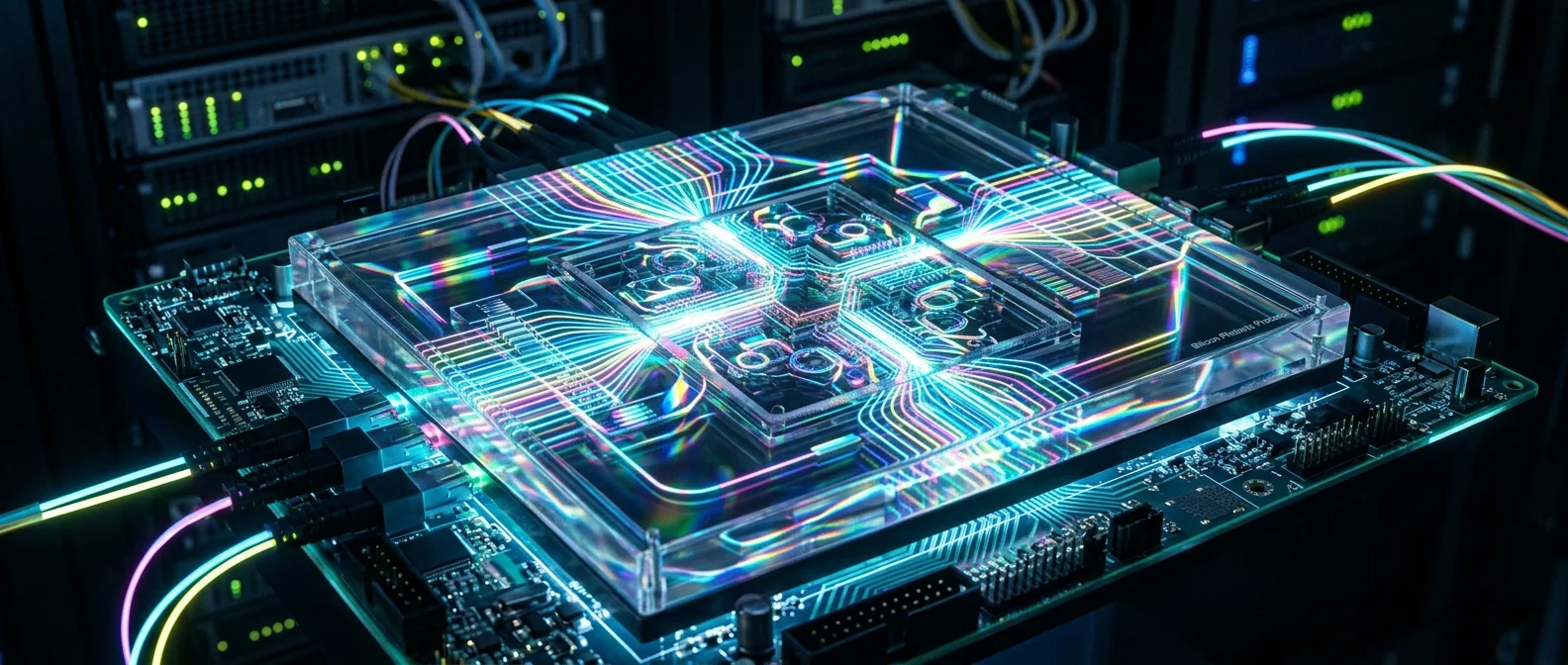

What Are Photonic Chips

Traditional electronic chips compute with electrons. Electrons travel through conductors, encounter resistance, and generate heat. Photonic chips replace electrons with photons, the particles of light. Photons carry no charge and have no rest mass, so they move through a waveguide without resistive heating. Signals on different wavelengths can share the same waveguide without disturbing each other, and they propagate at the speed of light in the medium.

The core principle: matrix multiplications — the fundamental operation of neural networks — can be performed optically using Mach-Zehnder interferometers. Instead of thousands of clock cycles, multiplication happens in a single pass of light — almost instantaneously.
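
To make the optical multiplication concrete, here is a minimal sketch of a single idealized Mach-Zehnder interferometer as a 2x2 matrix acting on two light amplitudes. It assumes lossless 50:50 beam splitters and one common phase convention. The function names (`mzi`, `output_powers`) and the parameters `theta` and `phi` are illustrative, not taken from any vendor's toolkit. Larger matrices are typically built from meshes of such elements.

```python
import cmath
import math


def matmul(a, b):
    """Multiply two matrices given as nested lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def beam_splitter():
    """Ideal lossless 50:50 beam splitter (assumed phase convention)."""
    s = 1 / math.sqrt(2)
    return [[s, 1j * s], [1j * s, s]]


def phase_shifter(angle):
    """Delay the upper arm by `angle` radians; the lower arm is untouched."""
    return [[cmath.exp(1j * angle), 0], [0, 1]]


def mzi(theta, phi):
    """Transfer matrix: input phase phi, splitter, internal phase theta, splitter."""
    bs = beam_splitter()
    return matmul(bs, matmul(phase_shifter(theta), matmul(bs, phase_shifter(phi))))


def output_powers(theta, phi, amplitudes):
    """Optical power at the two output ports for the given input amplitudes."""
    column = [[a] for a in amplitudes]
    fields = matmul(mzi(theta, phi), column)
    return [abs(row[0]) ** 2 for row in fields]


if __name__ == "__main__":
    # Sweep the internal phase with all light entering the upper port.
    for theta in (0.0, math.pi / 2, math.pi):
        print(theta, output_powers(theta, 0.0, [1.0, 0.0]))
```

In this model the internal phase `theta` sets how the input light is divided between the two outputs, which is how a weight gets "programmed" into the device. The multiplication itself happens as the light passes through.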

Why it matters: Training GPT-4 reportedly cost on the order of $100 million in compute and energy. If photonic chips cut that cost by 90%, training an equivalent model would cost about $10 million, putting large-scale training within reach of far more organizations.

The Major Players

Lightmatter

Founded out of MIT in 2017 by a team including Nicholas Harris and Darius Bunandar. Its Envise product is a photonic processor for AI inference. The more consequential product may be Passage, a 3D photonic interconnect that replaces electrical links between chips in data centers. Funding: roughly $850 million in total, from investors including Viking Global Investors, GV (Google Ventures), and T. Rowe Price. Valuation: $4.4 billion (2024).

Lightelligence

Lightelligence is an MIT spin-off behind the Hummingbird photonic chip. The company has focused on optimization problems such as logistics and routing, and it is planning inference chips for edge devices: small, energy-efficient units that run outside data centers.

iPronics (Spain)

iPronics is a European competitor developing programmable photonic processors that can be reconfigured dynamically, in effect a photonic FPGA. Target applications include 5G/6G telecommunications and AI.

Why Now

Timing is everything. Moore's Law is slowing down: leading-edge process nodes are now marketed as 2 nm, and transistor features are approaching atomic dimensions. At the same time, energy demand for AI is skyrocketing. The IEA estimates that data centers could consume over 1,000 TWh in 2026, roughly double 2022 levels.

Meanwhile, silicon photonics technology has matured. Photonic components can now be manufactured on the same production lines (foundries) as electronic chips — impossible just 10 years ago. TSMC and GlobalFoundries now offer silicon photonics processes.

"We're not replacing electronic chips. We're connecting them with light. Putting photons where electrons fail."

— Nicholas Harris, CEO, Lightmatter

Challenges

Photonic chips aren't a panacea. Numerical precision lags: electronic chips routinely compute in FP32 or FP16, while analog photonic hardware today reaches roughly 4 to 8 bits of effective precision. That is sufficient for many inference workloads but insufficient for training certain models.
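
To get a feel for what limited precision does to a neural-network operation, the sketch below rounds weights and inputs to a fixed number of bits and measures the resulting error in a dot product. It is a deliberately simple model: it treats "effective precision" as uniform rounding, which is an assumption and not how noise in real analog hardware behaves in detail. The helper names `quantize` and `mean_abs_error` are hypothetical.

```python
import random


def quantize(value, bits, max_abs=1.0):
    """Round `value` to the nearest of 2**bits evenly spaced levels in [-max_abs, max_abs]."""
    step = 2 * max_abs / (2 ** bits - 1)
    clipped = max(-max_abs, min(max_abs, value))
    return round((clipped + max_abs) / step) * step - max_abs


def dot(a, b):
    """Plain dot product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def mean_abs_error(bits, size=64, trials=200, seed=0):
    """Average absolute dot-product error when both operands are quantized to `bits`."""
    rng = random.Random(seed)  # fixed seed so every bit depth sees the same data
    total = 0.0
    for _ in range(trials):
        w = [rng.uniform(-1, 1) for _ in range(size)]
        x = [rng.uniform(-1, 1) for _ in range(size)]
        exact = dot(w, x)
        approx = dot([quantize(v, bits) for v in w], [quantize(v, bits) for v in x])
        total += abs(approx - exact)
    return total / trials


if __name__ == "__main__":
    for bits in (4, 6, 8, 16):
        print(bits, mean_abs_error(bits))
```

Under this model the error shrinks steadily as bits are added. That matches the intuition that inference can often tolerate a coarse result, while training accumulates many small gradient updates and needs finer resolution.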

Manufacturing requires extreme precision: waveguide dimensions must be controlled at the nanometer scale. Temperature shifts the behavior of optical components, so active thermal stabilization is required. Integration with existing software such as CUDA and PyTorch will take years.
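
A rough back-of-the-envelope sketch shows why temperature matters so much. It estimates the phase drift that a uniform temperature change introduces in a silicon waveguide, using the textbook relation Δφ = 2π·L·(dn/dT)·ΔT/λ. The coefficient of about 1.8e-4 per kelvin is the approximate bulk value for silicon near 1550 nm, used here as a stand-in for the waveguide's effective index. That substitution, the function name, and the example dimensions are all assumptions.

```python
import math

# Approximate thermo-optic coefficient of silicon near 1550 nm, per kelvin (assumed value).
SILICON_DN_DT = 1.8e-4
# Common telecom wavelength, in meters (assumed operating point).
WAVELENGTH_M = 1.55e-6


def thermal_phase_shift(length_m, delta_t_kelvin,
                        dn_dt=SILICON_DN_DT, wavelength_m=WAVELENGTH_M):
    """Phase change in radians caused by a uniform temperature change along the waveguide."""
    return 2 * math.pi * length_m * dn_dt * delta_t_kelvin / wavelength_m


if __name__ == "__main__":
    # Hypothetical example: a 1 mm waveguide warming by 1 K.
    print(thermal_phase_shift(1e-3, 1.0))
```

With these assumed numbers, a 1 mm waveguide warming by a single kelvin drifts by roughly 0.7 rad. That is a large error when the full range of an interferometer's phase setting is only π.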

Where It Leads

The most realistic prediction: photonic chips won't replace electronics — they'll complement them. A hybrid model: electronic cores for general computation, photonic modules for AI inference and data center interconnects. The transition mirrors the CPU-to-GPU shift — CPUs didn't disappear, but GPUs came to dominate AI.

If Lightmatter, Lightelligence, and others succeed, the 2030s could see data centers consuming a tenth of today's energy, AI models 100 times larger, and processing speeds that seem unimaginable now. Electrons brought us this far. Now it's light's turn.