
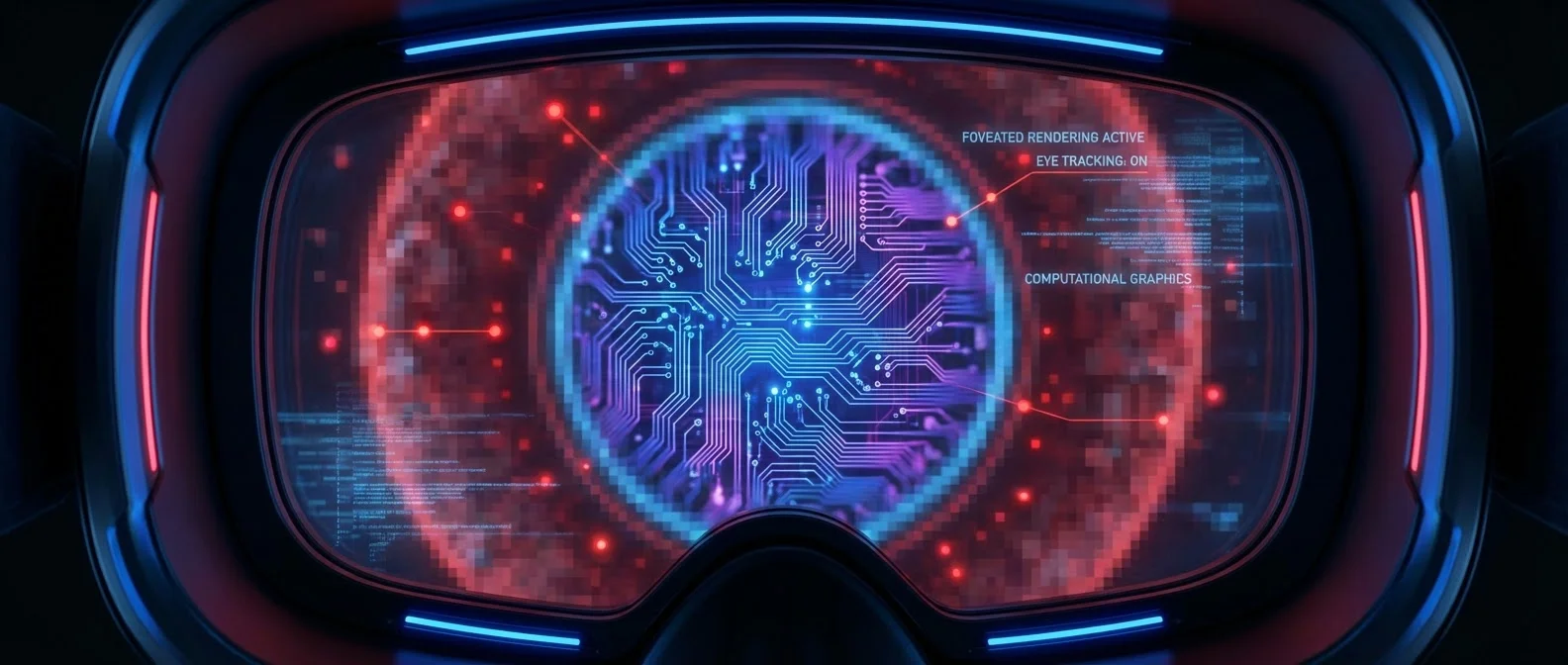

👁️ What Is Foveated Rendering

Foveated rendering is a graphics rendering technique that mimics the way the human eye actually works. Instead of rendering every pixel on the display at full resolution, it detects exactly where the user is looking and renders only that small area — typically 2-5 degrees around the gaze point — in high quality. The rest of the image is rendered at lower resolution, something the user virtually never notices due to the natural limitations of peripheral vision.

The name comes from the fovea centralis, a tiny depression at the center of the retina measuring just 1.5 mm across and responsible for our sharp central vision. According to Wikipedia, the fovea contains exclusively cone cells packed at extraordinary density (roughly 147,000 per square millimeter), and although it covers less than 1% of the retina's surface area, it takes up more than 50% of the visual cortex in the brain. In other words, our brain dedicates enormous resources to a minuscule portion of our visual field. VR headsets can apply the same logic: concentrate rendering resources where the fovea is pointed and stop wasting them where they're not needed.

This technique comes in two main forms. The first is fixed foveated rendering, which doesn't use eye tracking but assumes the user is always looking roughly at the center of the lens, a reasonable assumption in many scenarios. The second, more sophisticated variant is dynamic foveated rendering, which uses eye tracking sensors to monitor in real time exactly where the user is looking and moves the high-resolution zone accordingly.

🧠 How It Works

The core principle is straightforward: three concentric quality zones are created around the gaze point. The inner zone (foveal region) is rendered at full resolution. It corresponds to the sharpest area of the fovea, roughly 2 degrees of visual field or, in practical terms, about twice the width of a thumbnail at arm's length. The middle zone (parafoveal) is rendered at medium resolution, because here the human ability to distinguish fine detail drops significantly. Finally, the outer zone (peripheral) is rendered at the lowest resolution, since our peripheral vision primarily perceives motion and rough shapes, not detail.
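The three-zone idea can be sketched in a few lines of Python. This is only an illustration: the zone radii, the resolution scales, and the constant pixels-per-degree value are assumptions picked for the example, not figures from any particular headset or engine.

```python
import math

# Assumed zone boundaries, in degrees of eccentricity (angle from the gaze point).
FOVEAL_RADIUS_DEG = 5.0       # upper end of the 2-5 degree range mentioned earlier
PARAFOVEAL_RADIUS_DEG = 15.0  # hypothetical value chosen for illustration

# Assumed relative resolution per zone (1.0 = full resolution).
ZONE_SCALE = {"foveal": 1.0, "parafoveal": 0.5, "peripheral": 0.25}


def eccentricity_deg(pixel, gaze, pixels_per_degree):
    """Approximate angle between a pixel and the gaze point.

    Treats pixels-per-degree as constant across the display, which is a
    simplification (real lenses distort this mapping).
    """
    dx = pixel[0] - gaze[0]
    dy = pixel[1] - gaze[1]
    return math.hypot(dx, dy) / pixels_per_degree


def zone_for(pixel, gaze, pixels_per_degree=20.0):
    """Classify a pixel into one of the three quality zones."""
    ecc = eccentricity_deg(pixel, gaze, pixels_per_degree)
    if ecc <= FOVEAL_RADIUS_DEG:
        return "foveal"
    if ecc <= PARAFOVEAL_RADIUS_DEG:
        return "parafoveal"
    return "peripheral"


gaze_point = (1000, 1000)
for px in [(1000, 1000), (1200, 1000), (1400, 1000)]:
    zone = zone_for(px, gaze_point)
    print(px, zone, ZONE_SCALE[zone])
```

In a dynamic system the `gaze_point` would be updated every frame from the eye tracker; in a fixed system it would simply stay at the lens center.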

In a dynamic foveated rendering system, infrared LEDs embedded inside the headset emit invisible light toward the eyes. High-speed cameras, typically running at 120-250 Hz, capture the reflection from the pupil and cornea. Algorithms calculate the gaze vector in real time and relay this information to the graphics engine, which repositions the quality zones frame by frame. Total latency (eye-to-photon) is critical: if it exceeds 50-70 ms, the user begins to notice the low-resolution periphery after rapid eye movements (saccades), before the high-resolution zone has caught up with the new gaze point.
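To get a feel for how quickly that latency budget fills up, here is a rough back-of-the-envelope model. The breakdown into four stages and all the example timings are assumptions made for illustration; real pipelines overlap stages and measure latency differently.

```python
def eye_to_photon_ms(tracker_hz, processing_ms, display_hz, scanout_ms):
    """Rough worst-case latency from an eye movement to updated pixels.

    Simplified model (an assumption, not a measured pipeline): one full
    tracker sample interval, plus gaze processing, plus one full display
    frame for rendering, plus display scanout time.
    """
    sample_interval_ms = 1000.0 / tracker_hz
    frame_time_ms = 1000.0 / display_hz
    return sample_interval_ms + processing_ms + frame_time_ms + scanout_ms


def within_budget(latency_ms, budget_ms=50.0):
    """Check a latency estimate against the lower end of the 50-70 ms range."""
    return latency_ms <= budget_ms


# Hypothetical fast setup: 120 Hz tracker, 90 Hz display.
fast = eye_to_photon_ms(tracker_hz=120, processing_ms=5, display_hz=90, scanout_ms=5)

# Hypothetical slower setup: same tracker, heavier processing, 72 Hz display.
slow = eye_to_photon_ms(tracker_hz=120, processing_ms=20, display_hz=72, scanout_ms=10)

print(f"fast: {fast:.1f} ms, ok={within_budget(fast)}")
print(f"slow: {slow:.1f} ms, ok={within_budget(slow)}")
```

Even with a 120 Hz tracker, a few extra milliseconds of processing and a slower display are enough to push this simple estimate past 50 ms.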

At SIGGRAPH 2016, NVIDIA demonstrated a foveated rendering method it claimed was “invisible” to users, meaning the transition between quality zones was so smooth that nobody could detect it. The technique relied on a partnership with SMI (SensoMotoric Instruments), which had already showcased a new 250 Hz eye tracking system for VR headsets at CES 2016. Qualcomm, for its part, integrated foveated rendering support (Adreno Foveation) into the Snapdragon 835 VRDK in 2017, bringing this capability to mobile VR chipsets.

"The fovea centralis sees only the central 2 degrees of the visual field, yet it consumes more than 50% of the visual cortex. Half the nerve fibers in the optic nerve carry information exclusively from the fovea."

🔬 The Biology Behind the Technology

To understand why foveated rendering is so effective, you need a brief dive into the biology of vision. The human retina is divided into three main zones: the fovea (diameter ~1.5mm), the parafovea (extending in a 1.25mm radius around the fovea), and the perifovea (radius 2.75mm). Beyond these lies the broader peripheral area.

The fovea contains 50 cones per 100 μm, extraordinarily densely packed in a hexagonal pattern. The parafovea still has high cone density but already lower acuity, while the perifovea drops to 12 cones per 100 μm. The practical consequence is striking: our ability to distinguish fine detail plummets just a few degrees away from the fixation point. At the foveal center, almost every ganglion cell receives input from a single cone, and each cone feeds between one and three ganglion cells. The result is a ganglion-to-photoreceptor ratio of approximately 2.5:1, which underpins the fovea's exceptional spatial resolution.

It's worth noting that the fovea contains no rod cells, making it insensitive to dim light — that's why astronomers use “averted vision,” looking to the side to spot faint stars. This biological quirk means that in a VR environment, the need for pixel-perfect rendering is truly limited to a very small area. Based on average cone density calculations, the human eye requires about 655 PPI at 25cm (phone distance) and 96 PPI at 1.73m (TV distance) to avoid resolving individual pixels — but these values apply only to the fovea. The periphery gets by with far less.
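The two PPI figures are linked by simple geometry: if one pixel must subtend the same tiny angle at the eye, the required density falls in proportion to viewing distance. The sketch below derives the angle implied by 655 PPI at 25 cm and scales it to 1.73 m. The function names are made up for this example, and the calculation only checks the scaling, not the underlying cone-density estimate.

```python
import math

MM_PER_INCH = 25.4


def implied_pixel_angle_rad(ppi, distance_mm):
    """Angle subtended by one pixel of a `ppi` display seen from `distance_mm`."""
    pixel_pitch_mm = MM_PER_INCH / ppi
    return math.atan(pixel_pitch_mm / distance_mm)


def required_ppi(pixel_angle_rad, distance_mm):
    """Pixel density at which one pixel subtends `pixel_angle_rad` at `distance_mm`."""
    pixel_pitch_mm = distance_mm * math.tan(pixel_angle_rad)
    return MM_PER_INCH / pixel_pitch_mm


angle = implied_pixel_angle_rad(655, 250)   # phone distance, 25 cm
arcmin = math.degrees(angle) * 60
tv_ppi = required_ppi(angle, 1730)          # TV distance, 1.73 m
print(f"{arcmin:.2f} arcmin per pixel, {tv_ppi:.1f} PPI at 1.73 m")
```

Under these assumptions the result lands at roughly 95 PPI, close to the 96 PPI quoted above; the small gap presumably comes from rounding in the original figures.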

🎮 Headsets Using Foveated Rendering

This technology is no longer experimental — it already ships in consumer headsets. Let's look at the most significant ones:

The HTC Vive Pro Eye (2019) was one of the first headsets with built-in eye tracking for foveated rendering, featuring Tobii's 120 Hz eye tracking sensors. The Meta Quest Pro (2022) followed with dynamic foveated rendering powered by infrared eye tracking sensors, noticeably improving image clarity without increasing GPU demands. The PlayStation VR2 (2023) brought Tobii eye tracking technology to a mainstream console headset, making foveated rendering accessible to millions of PS5 players.

The Apple Vision Pro (2024) took it a step further: the entire visionOS user interface is built around eye tracking. You look at an element to select it, and foveated rendering runs continuously in the background. The most recent development came in November 2025 with the announcement of the Valve Steam Frame, which introduces a variant called “foveated streaming.” It uses eye tracking to dynamically vary the bitrate during wireless streaming, delivering high bitrate to the center of your gaze and lower bitrate to the periphery. The key difference is that this happens at the video encoder level rather than in the application's rendering code.

Even without eye tracking, fixed foveated rendering delivers significant gains. In December 2019, Oculus added dynamic fixed foveated rendering support to the original Quest SDK, improving performance on standalone mobile VR. Here “dynamic” refers to the foveation level being adjusted automatically, not to eye tracking. The approach simply renders lower resolution at the edges of the lens without knowing where the user is looking, but it works well enough because VR lenses inherently produce lower optical quality at the periphery anyway.
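A fixed foveation pattern can be pictured as a grid of tiles whose resolution depends only on distance from the lens center. The following sketch builds such a grid; the tile layout, the two thresholds, and the three scale values are hypothetical choices for illustration and do not reflect the Oculus SDK's actual foveation levels.

```python
import math


def ffr_tile_map(tiles_x, tiles_y, inner=0.4, outer=0.75):
    """Return a grid of relative resolution scales for fixed foveated rendering.

    Distance is measured from the lens center and normalized so the corner
    tiles sit at 1.0. `inner` and `outer` are hypothetical thresholds.
    """
    cx = (tiles_x - 1) / 2.0
    cy = (tiles_y - 1) / 2.0
    max_dist = math.hypot(cx, cy) or 1.0  # avoid dividing by zero for a 1x1 grid
    grid = []
    for ty in range(tiles_y):
        row = []
        for tx in range(tiles_x):
            d = math.hypot(tx - cx, ty - cy) / max_dist
            if d <= inner:
                row.append(1.0)    # full resolution near the lens center
            elif d <= outer:
                row.append(0.5)    # reduced resolution in the middle ring
            else:
                row.append(0.25)   # lowest resolution at the lens edges
        grid.append(row)
    return grid


for row in ffr_tile_map(8, 8):
    print(" ".join(f"{scale:4.2f}" for scale in row))
```

Because the pattern never moves, it can be computed once and reused every frame, which is part of why the fixed variant is so cheap to run on mobile hardware.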


⚡ How Much GPU Does It Save

The numbers are impressive. According to Michael Abrash, chief scientist at Oculus/Meta, combining foveated rendering with sparse rendering and deep learning image reconstruction can reduce the number of rendered pixels by an order of magnitude (10x) compared to a full image. In theory, that means a headset could produce an 8K-per-eye image (7680×4320, about 33 million pixels) by shading only about 3.3 million pixels, fewer than the roughly 8.3 million in a conventional 4K frame.

In practice, today's dynamic foveated rendering systems save 30-50% of GPU workload, with the best implementations reaching 60%. The savings aren't limited to GPU cycles: fewer rendered pixels means lower battery consumption (critical for standalone headsets), lower operating temperatures, and the ability to use lighter, more efficient chips. Meta's DeepFovea project explores the more aggressive end of this idea: it uses generative adversarial networks (GANs) to reconstruct full image quality from minimal rendered pixels in the periphery.
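A quick estimate shows where savings of this size come from. The sketch below computes the fraction of pixels still shaded under a three-zone layout. Everything about the setup is assumed for illustration: a square 100-degree field of view, gaze at its center, circular zones, and the particular radii and scales used in the example.

```python
import math


def shaded_pixel_fraction(fov_deg, foveal_radius_deg, mid_radius_deg,
                          mid_scale, outer_scale):
    """Fraction of full-resolution pixels still shaded with three zones.

    Assumes a square field of view, gaze at its center, circular zones that
    fit inside it, and a flat (undistorted) mapping from degrees to pixels.
    Scales are per axis, so pixel cost goes with the square of the scale.
    """
    if not 0 < foveal_radius_deg <= mid_radius_deg <= fov_deg / 2:
        raise ValueError("zones must be nested and fit inside the field of view")
    total = fov_deg ** 2
    foveal = math.pi * foveal_radius_deg ** 2
    mid = math.pi * mid_radius_deg ** 2 - foveal
    outer = total - foveal - mid
    cost = foveal + mid * mid_scale ** 2 + outer * outer_scale ** 2
    return cost / total


fraction = shaded_pixel_fraction(100, 15, 30, mid_scale=0.75, outer_scale=0.5)
print(f"shaded: {fraction:.1%}, saved: {1 - fraction:.1%}")
```

With these made-up numbers, only about 37% of the pixels are shaded. Pixel savings do not translate one-to-one into GPU time, since geometry processing and other fixed per-frame costs don't shrink with resolution, which is one reason real-world figures tend to be lower than the raw pixel count suggests.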

There's also an intriguing question: if we save 50% of the GPU, what happens to that “headroom”? In practice, developers can reinvest it in higher foveal zone resolution, more complex shaders, more realistic lighting (ray tracing), or higher frame rates, all of which improve visual quality and help reduce motion sickness.

🔮 The Future: AI, Retinal Displays, and Next-Gen Headsets

Foveated rendering technology is evolving rapidly, and in 2026 we've reached a tipping point. Artificial intelligence is playing an increasingly central role: Meta's DeepFovea uses deep learning to “imagine” what should appear in the periphery based on minimal information, producing an image that looks fully rendered but costs a fraction of the computational load. This approach — gaze-contingent rendering enhanced with AI — is considered the cornerstone technology for next-generation headsets.

Research in the field dates back at least to 1991, but only now have the necessary technologies converged: eye tracking sensors fast and accurate enough, GPUs powerful enough for complex variable rate shading, and AI models smart enough for image reconstruction. Valve's Steam Frame shows how far the idea can stretch: the technique can even be applied to video encoding, extending the “render less where you're not looking” logic beyond the renderer into the networking layer.

Looking ahead, the trend is clear: eye tracking looks set to become a core feature of major VR headsets in 2026-2027. This evolution isn't just about rendering. Eye tracking also unlocks more natural UI interactions, better social presence through gaze representation in avatars, and new accessibility possibilities. Foveated rendering is the first, and perhaps most important, application of this technology, but it certainly won't be the last.

Key Takeaways

- The fovea centralis covers just 2° but uses 50%+ of the visual cortex

- Fixed foveated rendering works without eye tracking — assumes lens center

- Dynamic foveated rendering uses infrared eye tracking at 120-250 Hz

- GPU savings of 30-60%, theoretically up to 10x with AI reconstruction

- PSVR2, Quest Pro, Apple Vision Pro: already shipping; Steam Frame: announced November 2025

- Meta's DeepFovea uses GANs to reconstruct peripheral imagery

- Foveated streaming (Valve) applies the principle at the video encoding layer