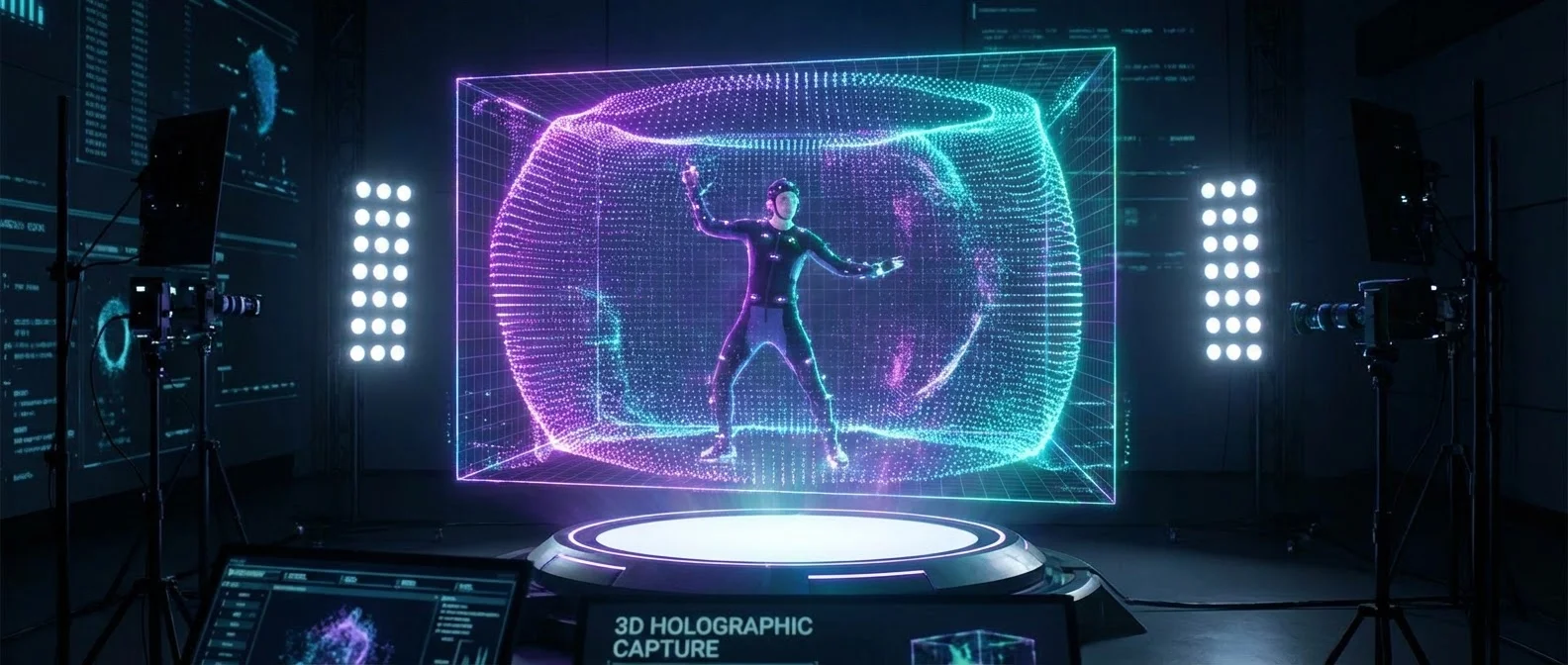

What Is Volumetric Video

Volumetric video is a capture technique that records three-dimensional spaces, locations, or human performances in full depth. Unlike traditional video, which stores only a two-dimensional frame, volumetric video preserves position, colour, and motion data across all three dimensions. The result can be played on flat screens, but truly shines when experienced through VR headsets, where viewers can navigate the scene with six degrees of freedom (6 DoF).

This technology blends elements from photogrammetry, computer graphics, LIDAR scanning, and machine learning. Its roots stretch back to early experiments reconstructing 3D models from footage, but only in recent years — thanks to the rise of consumer VR and breakthroughs like Neural Radiance Fields — has it become truly practical.

The History of Volumetric Capture

The idea of recording content free from the constraints of a flat screen has inspired science fiction for decades, from the holograms of Star Wars onwards, and has gradually moved toward reality. The iconic bullet time effect in The Matrix (1999), shot with dozens of synchronised cameras, was an early example of multi-view capture and is now considered a precursor to modern volumetric techniques.

In 2007, Radiohead used LIDAR scanning for their music video “House of Cards”, one of the earliest creative uses of volumetric capture. Director James Frost and media artist Aaron Koblin recorded the singer's face as point clouds. A point cloud is a dense set of samples in 3D space, each carrying position and colour, but the hardware of that period could not render such data in real time.

The major breakthrough came in 2010 when Microsoft released the Kinect, a consumer device that used structured light in the infrared spectrum to generate real-time depth maps. Although designed for gaming, the Kinect was quickly adopted as a general 3D capture device. Microsoft researchers built an entire capture stage with multiple cameras and algorithms, which evolved into the Microsoft Mixed Reality Capture Studio, used for the Blade Runner 2049 VR experience among other projects. Three studios now operate: in Redmond, WA; San Francisco, CA; and London.

Photogrammetry brought even greater fidelity: triangulation and depth estimation algorithms combine the images from dozens of synchronised cameras into highly detailed replicas of real-world spaces. 4DViews installed the first volumetric video capture system in Tokyo in 2008, and later Intel, Samsung, and Microsoft built their own dedicated capture stages.
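To make the triangulation step concrete, here is a minimal Python sketch of linear (DLT) triangulation from two views. The camera matrices, the helper names `project` and `triangulate`, and the test point are illustrative assumptions; real photogrammetry stages use many more cameras, plus calibration and refinement steps that are not shown.

```python
import numpy as np

def project(P, X):
    """Project a 3D point X with a 3x4 camera matrix P to 2D image coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

def triangulate(P1, P2, x1, x2):
    """Recover a 3D point from its projections x1 and x2 in two cameras."""
    # Each observation contributes two linear constraints on the homogeneous point.
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

# Two hypothetical cameras, one unit apart along the x axis, looking down +z.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
point = np.array([0.5, 0.2, 4.0])
estimate = triangulate(P1, P2, project(P1, point), project(P2, point))
```

With noise-free projections, `estimate` matches `point` to numerical precision. With real images the observations are noisy, which is one reason capture stages use dozens of cameras rather than two.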

Workflows: Mesh vs Point Cloud

There are two main approaches to creating volumetric video, each with its own strengths and limitations.

Mesh-Based Workflow

The mesh-based approach generates traditional triangle meshes, similar to the geometry used in video games and visual effects. Data volumes are smaller, but the quantisation of real-world data into lower-resolution forms limits detail. Photogrammetry serves as the foundation for static meshes, while performance capture is achieved through videogrammetry. The final result resembles a game pipeline: playback happens in a real-time engine, allowing interactive lighting changes and compositing of static and animated meshes.

Point-Based Workflow

The point-based approach represents data as points (or particles) in 3D space carrying colour and size attributes. Point clouds offer higher information density and greater resolution, but require enormous data rates. Current GPU hardware is optimised for mesh-based rendering, not points, making real-time display challenging. However, the lighting information is exceptionally accurate — even if it can't be changed dynamically. A key advantage: computer graphics can also be rendered as points, enabling a seamless blend of real and imagined elements.
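As a rough illustration of what one frame of point-based data might look like in memory, the sketch below uses a NumPy structured array. The field layout (`float32` position, 8-bit RGB colour, `float32` size) and the name `make_frame` are assumptions made for this example, not a standard file format.

```python
import numpy as np

# Hypothetical per-point layout: 12 bytes position + 3 bytes colour + 4 bytes size.
POINT_DTYPE = np.dtype([
    ("position", np.float32, (3,)),
    ("colour", np.uint8, (3,)),
    ("size", np.float32),
])

def make_frame(num_points, seed=0):
    """Build one synthetic frame of random points inside a 2 m cube."""
    rng = np.random.default_rng(seed)
    frame = np.zeros(num_points, dtype=POINT_DTYPE)
    frame["position"] = rng.uniform(-1.0, 1.0, size=(num_points, 3))
    frame["colour"] = rng.integers(0, 256, size=(num_points, 3), dtype=np.uint8)
    frame["size"] = 0.01  # every point drawn as a 1 cm splat
    return frame

frame = make_frame(1000)
print(frame.dtype.itemsize, "bytes per point,", frame.nbytes, "bytes per frame")
```

Because every point carries its own colour, lighting is baked into the data, which matches the trade-off described above: accurate appearance, but no dynamic relighting.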

Neural Radiance Fields: The NeRF Revolution

Perhaps the most significant breakthrough in this field came with Neural Radiance Fields (NeRF), first introduced in 2020 by researchers from UC Berkeley, Google Research, and UC San Diego. A NeRF represents a scene as a continuous volumetric radiance function, parametrised by a deep neural network. Given a spatial location (x, y, z) and a viewing direction (θ, φ), the network predicts the volume density at that location and the radiance (colour) emitted toward the viewer.
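In symbols, the mapping described above is commonly written as follows. Here F with subscript Θ stands for the network with weights Θ, σ for the volume density, and c for the emitted RGB radiance; the symbol names follow the usual convention in the NeRF literature and are otherwise arbitrary.

```latex
F_\Theta : (x, y, z, \theta, \phi) \;\longmapsto\; (\sigma, \mathbf{c}),
\qquad \sigma \ge 0, \quad \mathbf{c} = (r, g, b)
```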

The process is remarkably straightforward at its core. Dozens of photographs of a scene are taken from various angles, and the camera position for each one is recorded or estimated. For each image, rays are cast through the scene and sampled at 3D points along their length. A multi-layer perceptron (MLP) predicts density and colour at those points, the samples along each ray are composited into a pixel colour, and gradient descent adjusts the network until the rendered images match the real ones. Because the entire process is differentiable, the error can be minimised automatically.
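The compositing step for a single ray can be sketched in a few lines of NumPy. This is a minimal illustration of discrete volume rendering, assuming the densities and colours at each sample have already been predicted; the function name `composite_ray` is made up for this example, and no network or training loop is shown.

```python
import numpy as np

def composite_ray(densities, colours, deltas):
    """Blend per-sample colours along one ray into a single pixel colour.

    densities: (N,) volume density at each sample, front to back
    colours:   (N, 3) RGB colour at each sample
    deltas:    (N,) distance between consecutive samples
    """
    densities = np.asarray(densities, dtype=float)
    colours = np.asarray(colours, dtype=float)
    deltas = np.asarray(deltas, dtype=float)
    # Opacity contributed by each sample segment.
    alphas = 1.0 - np.exp(-densities * deltas)
    # Transmittance: fraction of light that survives to reach each sample.
    transmittance = np.concatenate(([1.0], np.cumprod(1.0 - alphas)[:-1]))
    weights = transmittance * alphas
    return weights @ colours

# A nearly opaque red sample in front of a green one: the pixel comes out red.
pixel = composite_ray([1e9, 1.0], [[1, 0, 0], [0, 1, 0]], [1.0, 1.0])
```

Every operation here is differentiable, so a loss such as the squared difference between `pixel` and the photographed pixel can be pushed back through the weights to whatever produced the densities and colours.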

The original NeRF, however, had significant limitations: slow training (hours to days), a requirement for consistent lighting across input images, and an inability to render in real time. This spawned a wave of improvements that transformed NeRF from a research curiosity into a practical tool.

Key NeRF Advancements

From 2020 onward, a series of improvements fundamentally changed what was possible:

- Fourier Feature Mapping (2020): Addressed the spectral bias problem, enabling faster convergence on high-frequency details.

- NeRF in the Wild (2021): Google developed a method splitting the network into three separate MLPs — one for static volumetric radiance, one for lighting changes, and one for transient objects. This enabled training from mobile phone photos taken at different times of day.

- Mip-NeRF (2021): Improved sharpness across multiple viewing scales using conical frustums instead of individual rays, virtually eliminating aliasing.

- PlenOctrees (2021): Enabled real-time rendering of pre-trained NeRFs through octree subdivision — rendering 3,000× faster than the original NeRF.

- Relighting (2021): Added new parameters to the MLP (normals, materials, visibility), allowing re-rendering under any lighting conditions without retraining.

- Instant NeRF (2022): Nvidia's method uses a multiresolution hash encoding of spatial coordinates and parallelised GPU architectures to cut NeRF training times to seconds or minutes, an improvement of orders of magnitude.
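The Fourier feature idea from the first item in the list is simple enough to sketch. The version below uses fixed power-of-two frequencies, which is one common form of the encoding; the function name `fourier_features` and the default of four frequencies are assumptions for illustration, and the published method also covers randomly sampled frequencies, which are not shown.

```python
import numpy as np

def fourier_features(points, num_frequencies=4):
    """Map coordinates to sines and cosines at increasing frequencies.

    points: array of shape (..., D)
    returns: array of shape (..., D * 2 * num_frequencies)
    """
    points = np.asarray(points, dtype=float)
    freqs = (2.0 ** np.arange(num_frequencies)) * np.pi   # shape (L,)
    angles = points[..., None] * freqs                     # shape (..., D, L)
    features = np.concatenate([np.sin(angles), np.cos(angles)], axis=-1)
    return features.reshape(*points.shape[:-1], -1)

encoded = fourier_features(np.zeros((5, 3)))
print(encoded.shape)  # (5, 24)
```

Feeding these features to the MLP instead of raw coordinates gives it direct access to high-frequency signals, which is how the encoding counteracts spectral bias.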

3D Gaussian Splatting: The New King

If NeRF was the “revolution” in volumetric video, 3D Gaussian Splatting (3DGS) is the “evolution” that surpassed it in many areas. Presented in July 2023 by Inria researchers, it ignited enormous interest in the computer graphics community.

Rather than representing a scene as a volumetric function (like NeRF), 3DGS uses a sparse cloud of 3D Gaussians — each with position, covariance (ellipsoid shape), colour, and opacity. The process starts from a sparse point cloud (via Structure from Motion) and each point is converted into a Gaussian. Through stochastic gradient descent, the Gaussians are optimised until the image “splatted” onto the screen matches the training images.
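To show how a covariance matrix encodes an ellipsoid's shape, the sketch below builds one from three axis scales and a rotation quaternion, the factorisation Σ = R S Sᵀ Rᵀ used in the 3DGS paper. The function names are illustrative assumptions, and the density function omits the opacity and colour terms a real renderer would add.

```python
import numpy as np

def quaternion_to_rotation(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = np.asarray(q, dtype=float) / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

def gaussian_covariance(scale, quaternion):
    """Build a covariance matrix as R S S^T R^T (always symmetric, never negative)."""
    R = quaternion_to_rotation(quaternion)
    S = np.diag(np.asarray(scale, dtype=float))
    M = R @ S
    return M @ M.T

def gaussian_density(point, mean, covariance):
    """Unnormalised Gaussian falloff: 1.0 at the mean, decaying with distance."""
    d = np.asarray(point, dtype=float) - np.asarray(mean, dtype=float)
    return float(np.exp(-0.5 * d @ np.linalg.solve(covariance, d)))

# An ellipsoid with axis scales 1, 2, 3, rotated 90 degrees about the z axis.
half_angle = np.pi / 4
cov = gaussian_covariance([1.0, 2.0, 3.0], [np.cos(half_angle), 0.0, 0.0, np.sin(half_angle)])
```

Optimising scale and rotation separately, rather than the nine matrix entries directly, keeps the covariance valid at every gradient step.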

The results are striking: a full 3DGS model trains in 35–45 minutes (versus 48 hours for Mip-NeRF360) and renders in real time, whilst NeRF needed roughly 10 seconds per frame. Quality is on par or slightly superior. The “4D Gaussian Splatting” extension adds a temporal component for dynamic scenes and moving objects — critical for volumetric performance capture of live actors.

Light Fields: The Science of Light

Beyond NeRF and Gaussian Splats, a third category of technology plays a significant role in volumetric video: light fields. A light field describes, at every point in space, the incoming light from all directions. The concept traces back to Michael Faraday, who first proposed that light should be interpreted as a field, and it was formalised by Andrey Gershun in a landmark 1936 paper.
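This description is often written as a five-dimensional function of position and direction. In empty space the radiance along a ray does not change, so one dimension is redundant and the light field can be written with four coordinates, commonly the intersections (u, v) and (s, t) of each ray with two parallel planes. The symbol names below are conventional choices, not taken from the text above.

```latex
L = L(x, y, z, \theta, \phi)
\quad \xrightarrow{\ \text{free space}\ } \quad
L = L(u, v, s, t)
```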

From a technological standpoint, light fields are captured using multiple cameras or specialised devices (plenoptic cameras). Lytro, founded in 2006 and closed in 2018, created the first consumer light field cameras. Facebook/Meta used a similar approach in their Surround360 camera family for 360° footage with depth information. The ability to refocus after capture and to support stereoscopic viewing makes light fields well suited to immersive VR content.
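Post-capture refocusing can be illustrated with the classic shift-and-add approach: each sub-aperture view is shifted in proportion to its position on the aperture, then all views are averaged. The sketch below is a simplified assumption-laden version (integer pixel shifts, wrap-around at the borders via `np.roll`, a made-up `refocus` function and a three-view synthetic scene), not the pipeline of any particular camera.

```python
import numpy as np

def refocus(views, offsets, slope):
    """Synthesise an image focused at the depth selected by `slope`.

    views:   (N, H, W) sub-aperture images
    offsets: N pairs (u, v) giving each view's position on the aperture
    slope:   pixels of shift per unit of aperture offset
    """
    views = np.asarray(views, dtype=float)
    result = np.zeros_like(views[0])
    for view, (u, v) in zip(views, offsets):
        dy = int(round(slope * v))
        dx = int(round(slope * u))
        # np.roll wraps at the edges; a real implementation would pad instead.
        result += np.roll(view, shift=(dy, dx), axis=(0, 1))
    return result / len(views)

# Synthetic scene: one bright point whose image moves 2 px per unit of offset.
offsets = [(-1, 0), (0, 0), (1, 0)]
views = np.zeros((3, 9, 9))
for i, (u, v) in enumerate(offsets):
    views[i, 4, 4 - 2 * u] = 1.0

sharp = refocus(views, offsets, slope=2)    # point lands on the same pixel in all views
blurred = refocus(views, offsets, slope=0)  # point is smeared across three pixels
```

Choosing a different `slope` brings a different depth into focus, which is why the focal plane can be decided after the capture has been made.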

"True immersion in virtual reality won't come from more realistic graphics — it will come from the ability to move freely through real three-dimensional content."

Volumetric Video in VR and Beyond

The rise of consumer VR from 2016 — with devices like the Oculus Rift and HTC Vive — breathed new life into volumetric video. Stereoscopic viewing, head rotation, and room-scale movement allow immersion far beyond any previous medium. The photographic quality of captures, combined with interactivity, brings users closer to the “holy grail” of true virtual reality.

Today, volumetric video is already used in commercial applications: the Scenez app delivers virtual concerts on Meta Quest and Apple Vision Pro, while Apple developed Apple Immersive Video — a standard for high-fidelity spatial video. NeRF technology is already being used to create immersive VR content, and with Gaussian Splats, real-time rendering opens the door to live experiences — concerts, sporting events, museum tours.

Beyond VR, applications are multiplying: sport uses volumetric replays (Intel True View at Arsenal, Liverpool, and Manchester City stadiums), medicine leverages NeRF for reconstructing 3D CT scans from sparse X-ray samples, robotics exploits the ability to understand transparent objects, and architecture creates digital twins of buildings before physical construction even begins.

Challenges and Future Directions

Despite tremendous progress, significant challenges remain. Data rates are enormous: every frame of an uncompressed capture stores position and colour for each point or vertex, so the volume of data grows with both scene detail and frame rate. MPEG is working on volumetric data compression, but there's still no universal streaming standard. GPU memory is also a bottleneck, since Gaussian Splats can consume over 20 GB of VRAM during training.
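A back-of-the-envelope calculation shows why uncompressed streaming is impractical. All three input numbers below are hypothetical (one million points per frame, 15 bytes per point for a `float32` position plus 8-bit RGB, 30 frames per second); real captures vary widely.

```python
def raw_data_rate_gbit_per_s(points_per_frame, bytes_per_point, fps):
    """Uncompressed bandwidth of a point cloud stream in gigabits per second."""
    bits_per_second = points_per_frame * bytes_per_point * fps * 8
    return bits_per_second / 1e9

# Hypothetical capture: 1,000,000 points, 15 bytes each, 30 fps.
rate = raw_data_rate_gbit_per_s(1_000_000, 15, 30)
print(f"{rate:.1f} Gbit/s")  # 3.6 Gbit/s
```

Even this modest assumed capture needs several gigabits per second before compression, which is the gap that volumetric codecs aim to close.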

Developing a new visual language for volumetric experiences is equally critical. Over more than a century of cinema, the art of directing has matured, but in a fully interactive, 6 DoF world, traditional filmmaking techniques simply don't work. The community needs time to discover the “language” of this new medium, just as cinema needed decades after the introduction of sound.

Furthermore, existing video production pipelines aren't ready for volumetric workflows. Every stage — from capture to editing, lighting, and distribution — needs to be redesigned. But progress is rapid: tools like Nerfstudio are democratising access, and major companies (Google, Meta, Apple, Nvidia) are investing millions in development.

The Future: Digital Holograms and Beyond

Looking ahead, the convergence of volumetric capture, generative AI, and advanced displays promises experiences that were pure science fiction until recently. NeRF technology has already been combined with generative AI, allowing users with no modelling experience to change lighting, remove objects, or modify scenes using natural language. 3D/4D Gaussians are opening the door to text-to-3D creation: type a description and receive a complete three-dimensional model.

Education, archaeology, tourism, and journalism can all benefit: imagine virtual tours of archaeological sites, digitally “teleported” sporting event experiences, or immersive reporting from conflict zones. And as computational capabilities grow, live volumetric video streaming in VR — in real time — will no longer be a fantasy.

Volumetric video isn't merely a technological evolution — it's the beginning of an entirely new way of capturing, storing, and experiencing reality. And in the VR era, it stands at the very centre of that transformation.

Key Takeaways

- Volumetric video captures full-depth 3D data for 6 DoF VR experiences

- Microsoft Mixed Reality Capture Studios operate at three locations: Redmond, San Francisco, and London

- NeRF (2020) enabled 3D scene reconstruction from ordinary photographs

- PlenOctrees deliver 3,000× faster rendering versus the original NeRF

- 3D Gaussian Splatting trains in 35–45 minutes, versus 48 hours for Mip-NeRF360

- Apple, Meta, and Intel are investing in commercial volumetric video applications

- Light fields enable post-capture re-focusing and stereoscopic viewing

- Key challenges: data rates, VRAM >20 GB, lack of streaming standard

- Generative AI + NeRF/3DGS is paving the way for text-to-3D creation