Qubits are extraordinarily sensitive to noise. We need thousands of physical qubits for every logical qubit. How are engineers solving this problem?

🔬 A Single Particle of Noise Is Enough

Imagine writing a letter on paper so sensitive that a single air molecule could erase the words. That is precisely what happens in the world of quantum computers. Qubits — the fundamental units of quantum information — are nothing like classical bits that store a stable 0 or 1. They exist in superposition, an astonishingly fragile state that can be destroyed by the slightest disturbance. Thermal vibrations, electromagnetic interference, even cosmic rays can disrupt a quantum computation in fractions of a second.

This problem is not a technical accident — it is fundamental. Decoherence, the process by which a quantum system loses its quantum properties through interaction with the environment, follows the laws of physics with relentless precision. And unlike classical computers, we cannot simply copy qubits — the no-cloning theorem forbids it.

💡 Peter Shor and the First Solution

In 1995, mathematician Peter Shor at AT&T Bell Labs confronted what seemed impossible. How do you correct errors without measuring — and thereby destroying — the information? His answer was radical: instead of copying a qubit, encode the logical information into an intricately entangled state of multiple physical qubits.

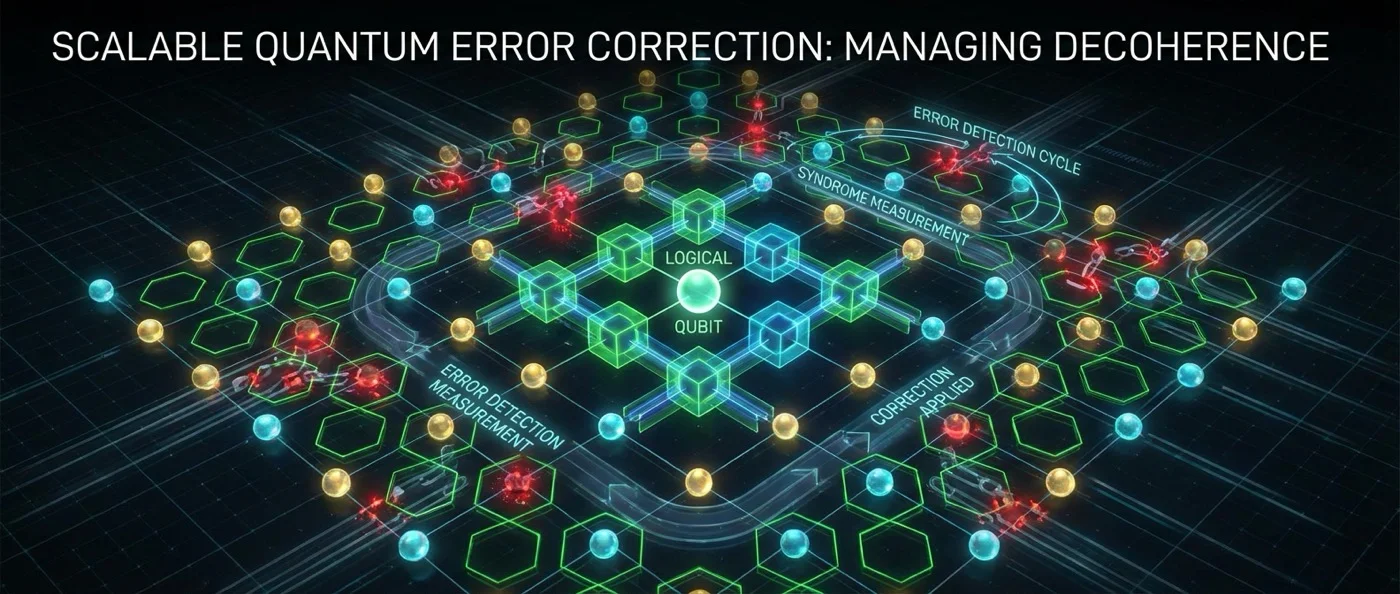

The Shor code [[9,1,3]] was the first quantum error-correcting code. Using 9 physical qubits to protect 1 logical qubit, it can detect and correct any error on a single qubit, whether a bit flip (X), a phase flip (Z), or both at once. The key idea: measure so-called “syndromes,” which carry information about the errors, without ever revealing the logical state itself.
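To make the syndrome idea concrete, here is a minimal sketch of the 3-qubit bit-flip code, the simpler building block that the Shor code repeats and nests to also catch phase flips. It is a toy state-vector simulation in NumPy, not the full 9-qubit code; the function names (`encode`, `apply_x`, `syndrome`, `correct`) are made up for illustration. The two parity checks identify which qubit flipped, yet their values do not depend on the amplitudes `a` and `b`.

```python
import numpy as np

def encode(a, b):
    """Logical state a|000> + b|111> as an 8-entry state vector."""
    state = np.zeros(8, dtype=complex)
    state[0b000], state[0b111] = a, b
    return state

def apply_x(state, qubit):
    """Bit-flip one qubit (qubit 0 is the leftmost bit of the index)."""
    mask = 1 << (2 - qubit)
    out = np.empty_like(state)
    for idx in range(8):
        out[idx ^ mask] = state[idx]
    return out

def syndrome(state):
    """Parity checks Z0Z1 and Z1Z2, reported as bits (1 = parity violated).

    With at most one bit flip the state is an eigenstate of both checks,
    so the expectation value is exactly +1 or -1.
    """
    bits = []
    for q1, q2 in ((0, 1), (1, 2)):
        expectation = 0.0
        for idx in range(8):
            b1 = (idx >> (2 - q1)) & 1
            b2 = (idx >> (2 - q2)) & 1
            expectation += (-1) ** (b1 ^ b2) * abs(state[idx]) ** 2
        bits.append(0 if expectation > 0 else 1)
    return tuple(bits)

# Each syndrome points at the single qubit that must be flipped back.
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(state):
    """Undo the bit flip indicated by the syndrome, if any."""
    qubit = LOOKUP[syndrome(state)]
    return state if qubit is None else apply_x(state, qubit)

original = encode(0.6, 0.8j)
damaged = apply_x(original, 1)      # bit flip on the middle qubit
repaired = correct(damaged)
```

Here `syndrome(damaged)` depends only on where the flip happened, so the same syndrome appears for any choice of `a` and `b`. That is why the measurement can guide the repair without collapsing the encoded superposition.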

🔄 From Shor to the Surface Code

A year later, Andrew Steane at Oxford improved the method. The Steane code [[7,1,3]] needed only 7 physical qubits instead of 9, treating bit and phase errors symmetrically. Even more impressive was the five-qubit code [[5,1,3]] found by Raymond Laflamme and colleagues, which showed that 5 physical qubits is the minimum needed to protect 1 logical qubit against an arbitrary single-qubit error, in line with the quantum Hamming bound.
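The five-qubit limit can be checked with a short calculation. The quantum Hamming bound says that a code on n qubits encoding k logical qubits and correcting t errors must satisfy 2^k · Σ_{j≤t} C(n, j) · 3^j ≤ 2^n, where the factor 3 counts the three Pauli errors (X, Y, Z) per qubit. The sketch below searches for the smallest n that fits. Strictly, the bound in this form applies to non-degenerate codes, and the helper names are chosen for illustration.

```python
from math import comb

def hamming_bound_holds(n, k=1, t=1):
    """True if n physical qubits leave room for all errors of weight <= t."""
    distinguishable_errors = sum(comb(n, j) * 3**j for j in range(t + 1))
    return 2**k * distinguishable_errors <= 2**n

def smallest_n(k=1, t=1):
    """Smallest number of physical qubits that satisfies the bound."""
    n = k + 1
    while not hamming_bound_holds(n, k, t):
        n += 1
    return n

min_qubits = smallest_n()  # one logical qubit, one correctable error
```

For n = 5 the two sides are equal (2 · 16 = 32 = 2^5), which is why the five-qubit code is called "perfect": it uses every bit of available space, while the 7- and 9-qubit codes leave room to spare.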

But the real revolution came in 1997 from Russian mathematician Alexei Kitaev. He introduced the topological code (toric code), an entirely different approach: instead of encoding information in isolated qubits, he used the geometry of a two-dimensional lattice. The Surface Code, developed in 1998, evolved this idea and is today considered the most promising code for practical quantum computers.

Why does the Surface Code dominate? Because it requires only local stabilizer measurements — each qubit interacts only with its neighbors on the lattice. This makes it practically buildable in real hardware, especially in superconducting circuits.
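The locality claim can be illustrated with a small layout sketch. It assumes the "rotated" variant of the Surface Code (the article does not name a variant) on a d × d grid of data qubits, with checks on the squares between them. The function name `rotated_surface_code` and the coordinate convention are illustrative choices, not taken from any library.

```python
from itertools import product

def rotated_surface_code(d):
    """Return (x_checks, z_checks) for odd distance d.

    Each check is a frozenset of the data-qubit coordinates it touches.
    Bulk checks touch 4 neighbors; boundary checks touch 2.
    """
    if d < 3 or d % 2 == 0:
        raise ValueError("this sketch assumes an odd distance d >= 3")
    x_checks, z_checks = [], []
    for i, j in product(range(-1, d), repeat=2):
        # Corners of the square cell (i, j) that fall inside the grid.
        corners = [(r, c) for r in (i, i + 1) for c in (j, j + 1)
                   if 0 <= r < d and 0 <= c < d]
        is_x = (i + j) % 2 == 0          # checkerboard of X and Z checks
        if len(corners) == 2:
            # Keep only X checks on top/bottom edges, Z checks on left/right.
            on_top_or_bottom = i in (-1, d - 1)
            if is_x != on_top_or_bottom:
                continue
        elif len(corners) != 4:
            continue                      # skip the single-qubit corner cells
        (x_checks if is_x else z_checks).append(frozenset(corners))
    return x_checks, z_checks

x_checks, z_checks = rotated_surface_code(3)
# X and Z checks commute exactly when they overlap on an even number of qubits.
all_commute = all(len(x & z) % 2 == 0 for x in x_checks for z in z_checks)
```

In this layout no check touches more than four data qubits, all of them adjacent on the grid, and a distance-d patch has d² data qubits plus d² − 1 checks. For d = 3 that is 9 + 8 = 17 physical qubits if each check gets its own measurement qubit.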

🎯 The Threshold Theorem: The Great Hope

The most important discovery in the theory was the threshold theorem. It was proven independently by Aharonov and Ben-Or, by Knill, Laflamme and Zurek, and by Kitaev. It states something remarkably optimistic: if the error rate of each quantum gate remains below a critical threshold, then we can perform quantum computations of arbitrary length.

"The entire content of the Threshold Theorem is that you're correcting errors faster than they're created. That's the whole point, and that's the non-trivial thing it shows."

— Scott Aaronson

For the Surface Code, the threshold is estimated at around 1%. This means that if each physical operation fails less than 1 time in 100, we can theoretically scale computation indefinitely. At an error rate of 0.1%, an estimated 1,000 to 10,000 physical qubits are needed for every logical qubit.
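A rough sense of where such overhead figures come from can be had from a common heuristic for the Surface Code: the logical error rate scales roughly as A · (p / p_th)^((d+1)/2), where p is the physical error rate, p_th the threshold, and d the code distance. The sketch below makes several assumptions that are not from this article: a prefactor A = 0.03, a target logical error rate of 10⁻¹², and a count of 2d² − 1 physical qubits per logical qubit (data plus measurement qubits in a rotated layout). Treat the result as an order-of-magnitude estimate only.

```python
def distance_for_target(p, p_th=0.01, target=1e-12, prefactor=0.03):
    """Smallest odd distance d with prefactor * (p/p_th)**((d+1)//2) <= target."""
    if not 0 < p < p_th:
        raise ValueError("the heuristic only applies below threshold")
    d = 3
    while prefactor * (p / p_th) ** ((d + 1) // 2) > target:
        d += 2
    return d

def physical_qubits(d):
    """Data plus measurement qubits for one rotated-surface-code patch (assumed layout)."""
    return 2 * d * d - 1

d = distance_for_target(p=0.001)          # 0.1% physical error rate
overhead = physical_qubits(d)
```

With these assumed inputs the sketch gives d = 21 and 881 physical qubits per logical qubit, near the low end of the range quoted above. Stricter error targets, a different prefactor, or extra qubits for routing and logical operations push the figure higher.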

⚡ The Experimental Race

For decades, quantum error correction remained a theoretical dream. The first experiment was conducted in 1998 using nuclear magnetic resonance (NMR), followed by demonstrations with linear optics, trapped ions, and superconducting qubits. But no experiment had achieved what truly mattered: making a logical qubit's lifetime exceed that of the physical qubits composing it.

In 2016, researchers at Yale University achieved exactly that for the first time. Using Schrödinger-cat states encoded in a superconducting resonator, together with a real-time feedback system, they pushed the logical qubit's lifetime past that of its physical counterparts, reaching the so-called “break-even point.”

🚀 Google, Microsoft, and the Push Forward

In February 2023, the Google Quantum AI team reported a landmark: using the Surface Code, the logical error rate decreased as the number of physical qubits increased, which is what the threshold theorem predicts once physical error rates are low enough. The logical error per cycle fell from 3.028% for a distance-3 code to 2.914% for a distance-5 code: a small gain, but in the right direction.
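The two figures above can be condensed into a single number, the error-suppression factor: the ratio of the logical error rate at one code distance to the rate at the next larger distance. A value above 1 means the bigger code performs better. The helper name below is illustrative.

```python
def suppression_factor(err_smaller_code, err_larger_code):
    """Ratio of logical error rates; > 1 means the larger code performs better."""
    if err_smaller_code <= 0 or err_larger_code <= 0:
        raise ValueError("error rates must be positive")
    return err_smaller_code / err_larger_code

# Logical error per cycle quoted above: distance 3 vs. distance 5.
factor_2023 = suppression_factor(0.03028, 0.02914)
```

The result is only about 1.04, which shows how narrow the 2023 margin was. The experiment sat just on the right side of break-even rather than deep below threshold.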

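In December 2024, Google's Willow processor went further: it demonstrated a distance-7 Surface Code operating below the threshold, with the logical error rate falling by roughly a factor of two at each step in code distance from 3 to 5 to 7. It was the first convincing demonstration that scaling works in practice.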

Meanwhile, in April 2024, Microsoft in collaboration with Quantinuum achieved a logical error rate 800 times better than the physical rate, creating 4 logical qubits from 30 physical ones using trapped-ion technology and real-time syndrome extraction.

⏳ Why Don't We Have Practical Quantum Computers Yet?

The numbers show why. If we need 1,000 physical qubits for every logical one, then a quantum computer capable of breaking modern cryptography, which requires thousands of logical qubits, would demand millions of physical qubits. Today's largest processors have on the order of a thousand physical qubits at most.

But the landscape is changing fast. In January 2025, researchers at UNSW Sydney demonstrated a different approach: instead of two-state qubits, they used quantum digits (qudits) with up to 8 states, encoded in the nuclear spin of an antimony atom in silicon. This technique substantially enlarges the information space per physical system, leaving room to encode a logical qubit and detect errors within a single atom.

The story of quantum error correction is an unending battle between the inevitable — that nature introduces errors — and human ingenuity. From Shor to Willow, progress shows that the battle can be won. Not by eliminating noise — that is impossible — but by correcting errors faster than they are created.