Quantum advantage has been demonstrated on artificial problems. When will it benefit real-world fields such as pharmaceuticals, finance, and logistics?

🔮 The promise that hasn't arrived yet

In October 2019, Google's team published a paper in Nature that shook the technology world. The Sycamore processor, with 53 superconducting qubits cooled to near absolute zero, completed a calculation in 200 seconds that, according to Google's estimates, would have required 10,000 years on Summit, then the world's most powerful classical supercomputer. John Preskill, a theoretical physicist at Caltech, had already coined the term “quantum supremacy” for precisely this milestone: the point at which a quantum computer solves a problem that no classical machine can solve in a feasible amount of time.

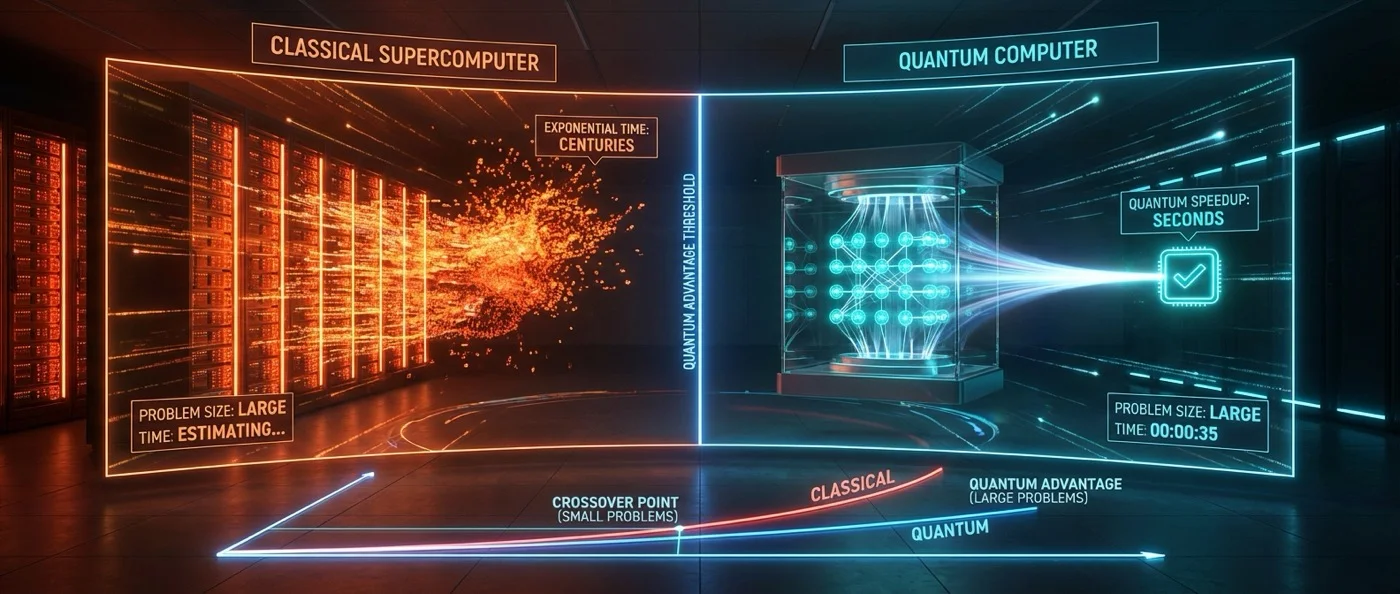

IBM responded within hours. The task would take not 10,000 years but 2.5 days, the company claimed, if Summit's full architecture, including its hard-drive storage, were used together with optimized algorithms. The dispute revealed something fundamental: the “line” between quantum and classical isn't static. Every time a quantum computer breaks a record, classical researchers improve their algorithms and narrow the gap. In 2024, Google itself acknowledged that, thanks to advanced tensor network algorithms, simulating those 53 qubits now took just 6 seconds on the Frontier supercomputer.
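One way to see why storage capacity entered this dispute is to estimate the memory a brute-force simulation would need. The sketch below assumes the simplest possible approach, holding all 2^n complex amplitudes in memory at 16 bytes each (double precision). The byte size and the helper names are illustrative assumptions; real simulations, including the tensor network methods mentioned above, use more compact representations.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store every amplitude of an n-qubit state vector.

    Assumes one complex number per basis state (2**n of them) and
    16 bytes per amplitude, i.e. two 64-bit floats. Both are assumptions
    made for illustration only.
    """
    return (2 ** num_qubits) * bytes_per_amplitude


def to_pib(num_bytes: int) -> float:
    """Convert a byte count to pebibytes (1 PiB = 2**50 bytes)."""
    return num_bytes / 2 ** 50


if __name__ == "__main__":
    # 53 qubits is the Sycamore size; 56 is the Zuchongzhi size.
    for n in (30, 40, 53, 56):
        pib = to_pib(statevector_bytes(n))
        print(f"{n} qubits: {pib:.6f} PiB")
```

Under these assumptions, each added qubit doubles the requirement, so 53 qubits already call for 128 PiB, far more than the RAM of any single machine.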

🌍 Three supremacy claims across three continents

Google wasn't alone. In December 2020, a team at the University of Science and Technology of China (USTC), led by Pan Jianwei, built Jiuzhang: a photonic quantum computer that performed Gaussian boson sampling on 76 photons. The team claimed a classical supercomputer would need 2.5 billion years to produce the same samples. A year later, Jiuzhang 2.0 detected 113 photons — an increase of 10 orders of magnitude in computational speed.

In parallel, Zuchongzhi, a superconducting quantum processor from USTC, used 56 qubits with random circuit sampling — 3 more qubits than Sycamore, raising the computational cost of classical simulation by 2-3 orders of magnitude. In June 2022, Canada's Xanadu reported a boson sampling experiment with up to 219 photons, claiming a speedup of 50 million times compared to previous experiments.

However, each of these claims concerned problems with no practical value. Random circuit sampling and boson sampling are mathematically interesting but don't design drugs, don't optimize supply chains, and don't break encryption.

⚠️ Why practical advantage is delayed

In May 2023, Nature published a special feature with a title that said it all: “Quantum computers: what are they good for?” — and the short answer was “for now, absolutely nothing.” That same year, an analysis in Communications of the ACM by Torsten Hoefler, Thomas Häner, and Matthias Troyer concluded that current quantum algorithms are "insufficient for practical quantum advantage without significant improvements across the entire hardware/software stack."

The obstacles are multilayered. First, classical computers, especially GPUs, continue to improve rapidly. Each new GPU generation raises the bar a quantum computer must clear. Second, today's quantum machines produce only limited amounts of entanglement before noise takes over. Third, even where theoretical advantages exist, as with Shor's algorithm for breaking RSA encryption and Grover's algorithm for database search, practical implementation demands resources that don't yet exist. One analytical estimate: at least 3 million physical qubits would be needed to break RSA-2048 in 5 months, and that assumes a fully error-corrected trapped-ion system.

"The number of continuous parameters describing the state of such a useful quantum computer at any given moment must be around 10^300. Could we ever learn to control that many parameters? My answer is simple: no, never."

Mikhail Dyakonov, IEEE Spectrum, 2018

💡 Where hope lies

The Hoefler-Häner-Troyer analysis identified the most promising candidates for practical quantum advantage in “small data” problems: chemistry and materials science challenges where the quantum nature of molecules themselves makes classical simulation exponentially hard. Richard Feynman had articulated this exact intuition in 1982: "Nature isn't classical, dammit, and if you want to simulate nature, you'd better use quantum mechanics." Seth Lloyd formally proved the claim in 1996.

In practice, the pharmaceutical industry sees quantum computing as a drug discovery tool. Simulating proteins and small molecules — critical for drug design — is an inherently quantum problem. In August 2020, Google researchers used Sycamore for the largest chemical simulation on a quantum computer at that time: a Hartree-Fock approximation with 12 qubits. In 2023, the company Gero used a hybrid quantum-classical generative model based on a Boltzmann machine running on a D-Wave annealer to create novel drug-like molecules.

In December 2024, Google unveiled the Willow processor — a milestone in reliability. For the first time, a logical qubit had a lower error rate than the physical qubits comprising it. This is considered a crucial step toward fault-tolerant quantum computing — the kind that can actually scale.
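Why a logical qubit that beats its physical qubits matters for scaling can be illustrated with a common textbook heuristic: once hardware is below the error-correction threshold, each increase of the code distance by 2 divides the logical error rate by a roughly constant suppression factor. The sketch below is a toy model under that assumption. The function name, the base error rate, and the suppression factor of 2 are hypothetical values chosen for illustration, not measurements from Willow.

```python
def logical_error_rate(distance: int, base_error: float, suppression_factor: float) -> float:
    """Toy model of logical error per cycle for an odd code distance >= 3.

    base_error is the assumed logical error rate at distance 3.
    suppression_factor is the assumed factor by which the error shrinks
    each time the distance grows by 2. Values above 1 mean the hardware
    is below threshold; values below 1 mean bigger codes make things worse.
    """
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    steps = (distance - 3) // 2
    return base_error / suppression_factor ** steps


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    for d in (3, 5, 7, 9, 11):
        rate = logical_error_rate(d, base_error=0.01, suppression_factor=2.0)
        print(f"distance {d}: logical error per cycle ~ {rate:.6f}")
```

In this model, a suppression factor above 1 is what turns extra qubits into exponentially better logical qubits; with a factor below 1, adding qubits would only add noise.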

🏭 Four sectors in the crosshairs

From a business perspective, potential quantum computing applications fall into four categories: cybersecurity (both breaking and strengthening encryption), data analytics and artificial intelligence (the HHL algorithm for linear systems, quantum machine learning), optimization and simulation (logistics, financial modeling, materials design), and data management.

But the same 2023 ACM analysis warns: for “big data,” unstructured linear systems, and Grover-type database searches, input/output (I/O) constraints make quantum speedup unlikely. Loading classical data into a quantum state is so slow it negates any computational speed gain. Grover's algorithm, while offering a quadratic speedup in theory, has never demonstrated practical superiority in any real scenario.
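To put the quadratic speedup in concrete terms, the following sketch compares idealized query counts for finding one marked item among N. It assumes a single marked item, an average of (N + 1) / 2 lookups for classical search, and about (π/4)·√N oracle calls for Grover's algorithm. The function names are illustrative, and the model counts oracle queries only: it deliberately ignores data loading, error correction, and the much slower clock speed of quantum hardware, which are exactly the costs the ACM analysis highlights.

```python
import math


def classical_expected_queries(n_items: int) -> float:
    """Average lookups to find one marked item by checking items one at a time."""
    return (n_items + 1) / 2


def grover_queries(n_items: int) -> int:
    """Approximate number of Grover iterations for one marked item.

    Uses the standard estimate floor(pi/4 * sqrt(N)).
    """
    return math.floor(math.pi / 4 * math.sqrt(n_items))


if __name__ == "__main__":
    for n in (10 ** 6, 10 ** 9, 10 ** 12):
        c = classical_expected_queries(n)
        g = grover_queries(n)
        print(f"N = {n:.0e}: classical ~ {c:.3e} queries, Grover ~ {g:.3e} queries")
```

The gap in query counts grows only as √N, so any large constant overhead per quantum query can cancel it for realistic problem sizes.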

⏳ When will things change?

No one can answer with certainty. Mathematician Gil Kalai questions whether true quantum supremacy will ever be achieved, arguing that noise in scaled-up quantum systems is a fundamental obstacle that cannot be engineered away. Physicist Mikhail Dyakonov maintains that the number of parameters in a useful quantum computer exceeds any possibility of control. On the other hand, progress in error correction is tangible: alongside Willow, new approaches that combine low-density parity-check codes with cat qubits suggest that 100 logical qubits built from 768 cat qubits could reduce errors to 1 per 10^8 cycles.

Today's reality lies somewhere in between: quantum computers in the NISQ era (Noisy Intermediate-Scale Quantum) don't yet solve any practical problem faster than classical ones. But every milestone — Sycamore's supremacy, Jiuzhang's photonic power, Willow's reliability — narrows the gap separating theory from practice. The question isn't if, but when.