Quantum neural networks and quantum learning algorithms. Where does real quantum advantage exist in AI, and where is it still a theoretical scenario?

🧠 The Day a Quantum Computer “Understood” an Image

In September 2017, Nature published a paper that helped shape how researchers think about the relationship between quantum computing and artificial intelligence. Jacob Biamonte, Peter Wittek, Nicola Pancotti, Patrick Rebentrost, Nathan Wiebe, and Seth Lloyd presented a systematic review titled “Quantum Machine Learning,” surveying the field as a whole. The central idea was radical: if quantum computers can manipulate exponentially large Hilbert spaces, why not use them to accelerate machine learning algorithms?

But the story began much earlier. As far back as 1995, Subhash Kak and Ron Chrisley independently published the first ideas about quantum neural networks, inspired by the theory of quantum mind: the hypothesis that quantum phenomena play a role in human cognitive function. Back then, the idea seemed almost like science fiction. Today, the field is in the midst of feverish research activity.

🔬 How Does a Quantum Machine Learn?

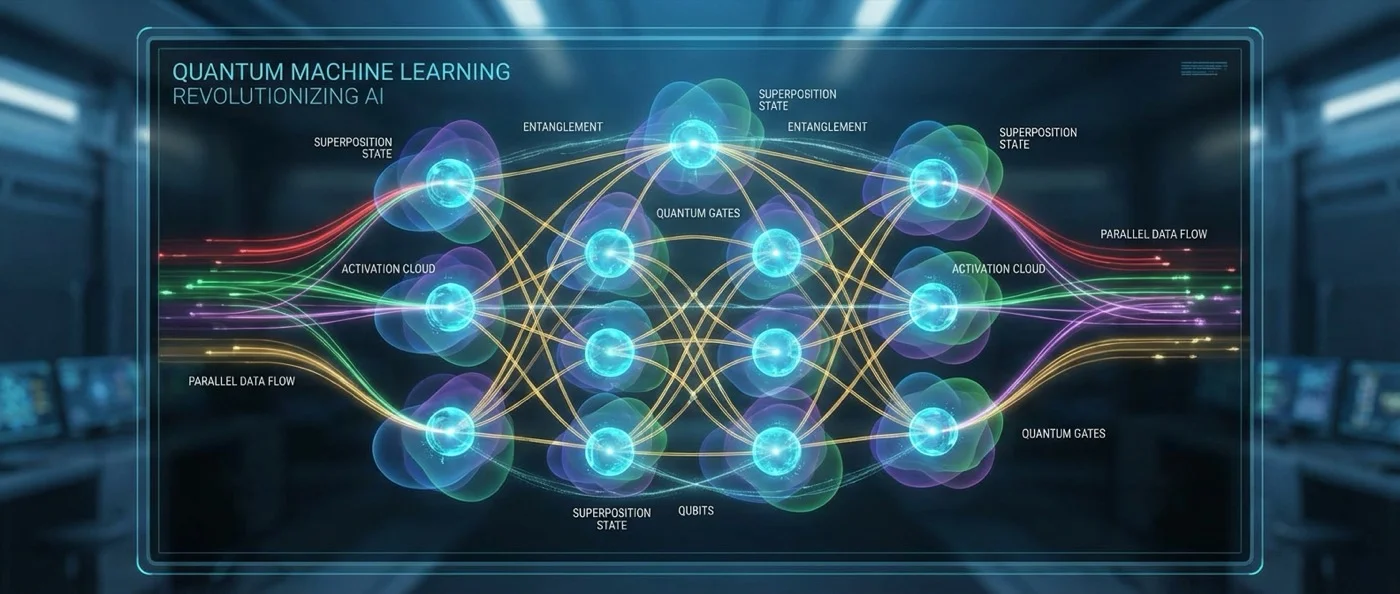

Classical machine learning relies on neural networks, gradient descent, and backpropagation. The quantum version exploits two fundamental principles: superposition and entanglement. A qubit can exist in a superposition of the states |0⟩ and |1⟩, which enables amplitude encoding: a system of n qubits carries 2^n probability amplitudes, so a data vector with 2^n entries fits into n qubits, an exponentially compact representation.
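
To make amplitude encoding concrete, here is a minimal classical sketch in NumPy. It only builds the vector of amplitudes an n-qubit register would hold; it does not prepare the state on hardware, and the helper name `amplitude_encode` is a hypothetical one chosen for illustration.

```python
import numpy as np

def amplitude_encode(x):
    """Turn a real vector into the amplitudes of an n-qubit state.

    Pads the vector with zeros up to the next power of two and
    normalizes it to unit length. Returns (state, n_qubits).
    Hypothetical helper for illustration only.
    """
    x = np.asarray(x, dtype=float)
    if not np.any(x):
        raise ValueError("cannot encode an empty or all-zero vector")
    # Smallest n with 2**n >= len(x); at least one qubit.
    n_qubits = max(1, (len(x) - 1).bit_length())
    padded = np.zeros(2 ** n_qubits)
    padded[: len(x)] = x
    return padded / np.linalg.norm(padded), n_qubits

# Six data values need only three qubits (2**3 = 8 amplitudes).
state, n_qubits = amplitude_encode([3.0, 1.0, 2.0, 0.0, 1.0, 1.0])
probabilities = state ** 2  # measurement probabilities of each basis state

print(n_qubits, state)
```

The squared amplitudes sum to 1, as measurement probabilities must. The catch is that preparing such a state on real hardware can itself be expensive, which is one reason the theoretical speedups are hard to realize.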

On this foundation was built the HHL algorithm (Harrow–Hassidim–Lloyd, 2009), the first quantum algorithm to promise an exponential speedup for solving linear systems of equations, a problem central to many machine learning applications, from regression analysis to recommendation systems. Seth Lloyd's team at MIT extended this logic with quantum PCA (Principal Component Analysis, 2014), while Rebentrost, Mohseni, and Lloyd introduced the quantum SVM (Support Vector Machine, 2014). In theory, these algorithms run in time logarithmic in the size of the data, provided the data can first be loaded efficiently into a quantum state, an assumption that is far from trivial in practice.

🌐 The Google–NASA Lab and the Dawn of Quantum AI

In 2013, Google Research, NASA, and the Universities Space Research Association took a bold step: they established the Quantum Artificial Intelligence Lab and installed a D-Wave quantum annealer, built by the first company to sell such machines commercially, at NASA's Ames Research Center. The goal was to explore how quantum annealing could train machine learning models, particularly Boltzmann machines and deep neural networks.

Research with the D-Wave yielded mixed results. On one hand, teams managed to train probabilistic generative models that recognized handwritten digits, according to the publication by Benedetti, Realpe-Gómez, Biswas, and Perdomo-Ortiz (2017). On the other hand, the question of whether this constituted genuine quantum advantage or merely an alternative implementation remained open.

⚙️ Variational Quantum Algorithms: The Hybrid Path

The most realistic path for quantum AI in the NISQ (Noisy Intermediate-Scale Quantum) era is variational quantum algorithms (VQAs). The architecture is hybrid: a classical computer optimizes the parameters of a quantum circuit, while the quantum computer performs the actual state preparation and measurement. This logic underpins the Variational Quantum Classifier and Parameterized Quantum Circuits (PQCs), which function analogously to classical neural networks but on quantum hardware.
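
The hybrid loop can be sketched on the smallest possible example: one qubit, one parameter. In this NumPy simulation the "quantum" step prepares RY(θ)|0⟩ and returns the expectation value ⟨Z⟩, while the classical step updates θ by gradient descent using the parameter-shift rule. The function names, learning rate, and starting value are illustrative assumptions, and an exact simulation stands in for real hardware.

```python
import numpy as np

Z = np.array([[1.0, 0.0], [0.0, -1.0]])

def ry(theta):
    """Matrix of the single-qubit rotation RY(theta)."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def expectation_z(theta):
    """'Quantum' step: prepare RY(theta)|0> and return <Z> (simulated exactly)."""
    state = ry(theta) @ np.array([1.0, 0.0])
    return float(state @ Z @ state)

def parameter_shift_gradient(theta):
    """Gradient of <Z> from two extra circuit evaluations shifted by +/- pi/2."""
    return 0.5 * (expectation_z(theta + np.pi / 2) - expectation_z(theta - np.pi / 2))

def train(theta=0.4, learning_rate=0.4, steps=100):
    """Classical step: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta

theta_opt = train()
final_cost = expectation_z(theta_opt)
print(theta_opt, final_cost)
```

Here ⟨Z⟩ = cos θ, so the optimizer drives θ toward π, where the cost reaches its minimum of −1. The parameter-shift rule matters in practice because it obtains gradients from the same kind of circuit runs the hardware already performs, with no access to the internal quantum state.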

In 2019, Iris Cong, Soonwon Choi, and Mikhail Lukin introduced Quantum Convolutional Neural Networks (QCNNs) — an architecture that mimics classical CNNs but uses quantum gates as convolution filters. The idea had elegance: each “layer” of the QCNN measures and discards qubits, progressively reducing dimensions — exactly like pooling layers in a classical CNN.

⚠️ The Barren Plateau Problem

If there is one point where quantum machine learning hits a wall, it is the barren plateau problem. In 2018, Jarrod McClean along with Sergio Boixo, Vadim Smelyanskiy, Ryan Babbush, and Hartmut Neven (all at Google) published a landmark paper in Nature Communications: “Barren plateaus in quantum neural network training landscapes.” They demonstrated that as the number of qubits increases, the gradients in the optimization landscape vanish exponentially. In practice, this means the training algorithm gets “lost” in an endless plain with no direction — hence the image of a “barren plateau.”
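
The effect can be glimpsed numerically even at small sizes. The sketch below is a simplified NumPy experiment in the spirit of that paper, not a reproduction of it: it builds random layered circuits (random Pauli rotations followed by a chain of CZ gates), uses the cost ⟨Z₀Z₁⟩, and estimates the variance of the gradient with respect to the first parameter. The depth, sample count, seed, and function names are all assumptions made for illustration.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
PAULIS = [
    np.array([[0, 1], [1, 0]], dtype=complex),     # X
    np.array([[0, -1j], [1j, 0]], dtype=complex),  # Y
    np.array([[1, 0], [0, -1]], dtype=complex),    # Z
]

def rotation(axis, theta):
    """exp(-i * theta / 2 * P) for the Pauli P selected by axis (0, 1, 2)."""
    return np.cos(theta / 2) * I2 - 1j * np.sin(theta / 2) * PAULIS[axis]

def apply_1q(state, gate, qubit, n):
    """Apply a 2x2 gate to one qubit of an n-qubit state vector."""
    psi = np.tensordot(gate, state.reshape([2] * n), axes=([1], [qubit]))
    return np.moveaxis(psi, 0, qubit).reshape(-1)

def apply_cz(state, q1, q2, n):
    """Apply a controlled-Z: flip the sign where both qubits are 1."""
    psi = state.reshape([2] * n).copy()
    index = [slice(None)] * n
    index[q1] = index[q2] = 1
    psi[tuple(index)] *= -1
    return psi.reshape(-1)

def cost(thetas, axes, n):
    """Run the layered circuit on |0...0> and return <Z0 Z1>."""
    state = np.zeros(2 ** n, dtype=complex)
    state[0] = 1.0
    for q in range(n):  # fixed initial layer of RY(pi/4)
        state = apply_1q(state, rotation(1, np.pi / 4), q, n)
    for layer_thetas, layer_axes in zip(thetas, axes):
        for q in range(n):
            state = apply_1q(state, rotation(layer_axes[q], layer_thetas[q]), q, n)
        for q in range(n - 1):
            state = apply_cz(state, q, q + 1, n)
    # Marginal distribution of the first two qubits; Z0 Z1 is +1 when they agree.
    probs = (np.abs(state) ** 2).reshape(2, 2, -1).sum(axis=2)
    return probs[0, 0] + probs[1, 1] - probs[0, 1] - probs[1, 0]

def gradient_first_param(thetas, axes, n):
    """Parameter-shift gradient with respect to the first rotation angle."""
    plus, minus = thetas.copy(), thetas.copy()
    plus[0, 0] += np.pi / 2
    minus[0, 0] -= np.pi / 2
    return 0.5 * (cost(plus, axes, n) - cost(minus, axes, n))

def gradient_variance(n, depth=20, samples=100, seed=0):
    """Sample variance of the gradient over random circuits and angles."""
    rng = np.random.default_rng(seed)
    grads = []
    for _ in range(samples):
        thetas = rng.uniform(0, 2 * np.pi, size=(depth, n))
        axes = rng.integers(0, 3, size=(depth, n))
        grads.append(gradient_first_param(thetas, axes, n))
    return float(np.var(grads))

variances = {n: gradient_variance(n) for n in (2, 4, 6)}
for n, v in variances.items():
    print(f"{n} qubits: Var[dE/dtheta] = {v:.5f}")
```

With only 100 samples per size the estimates are noisy, but the variance should drop noticeably as qubits are added. A gradient that is almost always close to zero is exactly what leaves the optimizer with no direction to follow.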

This was a dramatic finding. If you cannot train the circuit, you cannot do anything. For years, researchers tested architectures by trial and error, without theoretical guarantees that they would work. The situation changed in 2024–2025, when researchers at Los Alamos National Laboratory provided the first mathematical characterizations of when and why barren plateaus appear, offering theoretical guarantees for which architectures remain trainable as they scale.

⚖️ Real Capabilities or Exaggerated Promises?

Quantum machine learning is not merely theory. Recent studies on quantum kernel methods (a technique that maps data into a quantum Hilbert space, measures how similar the resulting states are, and hands those similarities to a classical classifier) have shown encouraging results. A major benchmarking study examining over 20,000 trained models (Schnabel & Roth, 2025) revealed universal patterns that guide effective quantum kernel design. Additionally, quantum generative models for tabular data achieved an 8.5% improvement over leading classical models while using only 0.072% of the parameters (Bhardwaj et al., 2025).
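
A quantum kernel can be illustrated with a deliberately simple feature map. In the NumPy sketch below each feature is angle-encoded into its own qubit, the kernel is the fidelity |⟨φ(a)|φ(b)⟩|² between two encoded states, and a classical kernel ridge fit does the classifying. The toy data, regularization value, and function names are assumptions for illustration. This product-state feature map is easy to simulate classically, so it shows the mechanics only, not any quantum advantage.

```python
import numpy as np

def feature_state(x):
    """Angle-encode each feature into one qubit: RY(x_i)|0> = [cos(x_i/2), sin(x_i/2)]."""
    state = np.array([1.0])
    for xi in x:
        state = np.kron(state, np.array([np.cos(xi / 2), np.sin(xi / 2)]))
    return state

def quantum_kernel(a, b):
    """Fidelity kernel k(a, b) = |<phi(a)|phi(b)>|^2 (states are real here)."""
    return float(np.dot(feature_state(a), feature_state(b)) ** 2)

def gram_matrix(A, B):
    """Kernel values between every row of A and every row of B."""
    return np.array([[quantum_kernel(a, b) for b in B] for a in A])

# Toy two-class data set (made up for this example).
X_train = np.array([[0.1, 0.2], [0.3, 0.1], [2.8, 3.0], [3.0, 2.7]])
y_train = np.array([-1.0, -1.0, 1.0, 1.0])

# Classical step: kernel ridge fit on the quantum-style Gram matrix.
K = gram_matrix(X_train, X_train)
alpha = np.linalg.solve(K + 1e-3 * np.eye(len(K)), y_train)

X_test = np.array([[0.2, 0.3], [2.9, 2.9]])
predictions = np.sign(gram_matrix(X_test, X_train) @ alpha)
print(predictions)
```

On quantum hardware, each kernel entry would be estimated from repeated measurements rather than computed exactly, and the feature map would typically include entangling gates so that the kernel is hard to evaluate classically.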

However, the picture is not monochrome. As several analyses point out, classical computers — and especially GPUs — continue to improve rapidly. Current quantum computers produce only limited entanglement before noise overwhelms them. And, more importantly, several quantum speedup promises concern theoretical worst-case scenarios that do not necessarily translate into practical superiority. Maria Schuld and Nathan Killoran posed the explicit question in 2022: “Is Quantum Advantage the Right Goal for Quantum Machine Learning?” — suggesting that perhaps quantum machine learning should be evaluated by different criteria.

🔮 The Three Frontiers of the Future

Quantum artificial intelligence is evolving on three main fronts. First, quantum-enhanced generative models for drug discovery and molecular design — a domain where quantum chemistry and machine learning converge naturally, since molecules are inherently quantum objects. Second, explainable quantum ML (XQML), a new field developing interpretability tools — such as quantum Shapley values — to understand exactly what a quantum circuit “learns.” Third, quantum reinforcement learning, where agents train in quantum environments using superpositions of states.

The truth is that nobody yet knows whether quantum machine learning will truly change the foundations of AI. What we do know is that the combination of quantum physics and machine learning is creating a new research field that is already generating pioneering ideas, even if their full implementation requires quantum hardware that does not yet exist. As Feynman put it in a 1981 lecture, published in 1982: “Nature isn't classical, dammit, and if you want to make a simulation of nature, you'd better make it quantum mechanical.” Perhaps the same logic applies to intelligence itself.